Guess what? Your VP of Product just asked for something visual to anchor tomorrow's board discussion on the Q3 roadmap. You have a PRD. You have bullet points. You have 16 hours and no designer availability. The pressure isn't about making slides look pretty. It's about translating a mountain of details into a single, coherent story that justifies months of work.

What you need isn't a better template. It's a clearer system. A well-crafted management plan isn't bureaucracy; it’s the shared consciousness of a team made tangible. It’s the instrument that turns a dozen individual workflows into a single, coordinated symphony. Most articles give you generic frameworks. This is different. We're dissecting the core logic behind the plans that prevent costly failures, turning abstract goals into concrete, shippable reality.

This article provides actionable frameworks you can adapt immediately. We will dissect eight distinct types of plans, from the initial Product Requirements Document (PRD) to the analytics that measure what ships. For each management plan example, we’ll analyze its structure, highlight key insights, and offer concrete steps for applying it to your own team. You will leave with a clearer understanding of how to transform planning from a tedious chore into a strategic advantage that drives clarity and alignment.

1. Product Requirements Document (PRD) Management Plan

A PRD isn't a document; it's a treaty. It is a negotiated agreement between possibility and reality, a shared map for designers, engineers, and stakeholders navigating the fog of creation. A PRD management plan is the framework that turns this document from a static artifact into a dynamic source of truth. It outlines the problem, hypothesizes a solution, defines success, and specifies the deliverables, ensuring everyone builds the same thing for the same reason.

This process prevents the most expensive error in product development: building the wrong feature perfectly. It forces clarity on the "why" before a single line of code is written. For instance, according to the book Inspired by Marty Cagan, the best product teams use the PRD to explicitly state non-goals to prevent scope creep. This is a stellar management plan example because it codifies alignment.

Strategic Breakdown

Problem Framing: A great PRD begins with the user's struggle, not the company's wish. It clearly articulates the pain point with qualitative and quantitative data.

Success Metrics: It defines what victory looks like with measurable KPIs. For example, "Increase feature adoption by 15% within Q3" is better than "Improve user engagement." These metrics must link directly to analytics dashboards.

Scope Definition: This section includes detailed user stories, functional requirements, and, crucially, non-goals. Explicitly stating what you are not building is as important as defining what you are.

Design & Engineering Specs: It should contain wireframes or prototypes, acceptance criteria, and considerations for edge cases and error states. You can explore a detailed PRD for an AI-powered music curation feature to see these components in action.

Actionable Takeaways

Make it a Living Document: A PRD is not static. It should be updated as you learn from user feedback and testing.

Prioritize Ruthlessly: Use a clear framework like RICE or MoSCoW to prioritize features within the PRD.

Visualize the Flow: Don't just describe the feature; map it out. A fast, iterative workflow can take you from a PRD to a functional prototype in hours, exposing gaps in logic much faster than text alone.

Involve Your Team Early: The PRD should be a collaborative document, not a decree from the product manager. Co-authoring builds ownership and surfaces technical constraints early.
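To make the RICE option concrete: RICE scores an item as (Reach × Impact × Confidence) / Effort. The sketch below assumes common scales (impact from 0.25 to 3, confidence as a fraction, effort in person-months); the feature names and estimates are hypothetical, and your team should calibrate its own scales.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # users affected per period (e.g. per quarter)
    impact: float      # e.g. 0.25 = minimal ... 3 = massive
    confidence: float  # 0.0 to 1.0
    effort: float      # person-months


def rice_score(feature: Feature) -> float:
    """RICE = (Reach * Impact * Confidence) / Effort."""
    if feature.effort <= 0:
        raise ValueError(f"effort must be positive for {feature.name!r}")
    return feature.reach * feature.impact * feature.confidence / feature.effort


def prioritize(features: list[Feature]) -> list[tuple[str, float]]:
    """Return (name, score) pairs, highest RICE score first."""
    scored = [(f.name, rice_score(f)) for f in features]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical backlog items and estimates.
backlog = [
    Feature("bulk-export", reach=2000, impact=1, confidence=0.8, effort=2),
    Feature("sso-login", reach=500, impact=3, confidence=0.5, effort=4),
    Feature("dark-mode", reach=4000, impact=0.5, confidence=1.0, effort=1),
]

for name, score in prioritize(backlog):
    print(f"{name}: {score:.1f}")
```

Treat the score as a conversation starter, not a verdict: a low-confidence item that still ranks high is a cue to gather evidence before it goes into the PRD.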

2. User Flow and Journey Mapping Management Plan

A feature isn't just a button; it's a path. A user flow or journey map is the cartographer's work of charting these paths, turning a series of screens into a coherent story of user intent and interaction. This plan isn't about aesthetics; it’s about momentum. It provides a visual framework that documents how users move through a product to achieve a goal, flagging decision points and uncovering the hidden friction causing drop-offs.

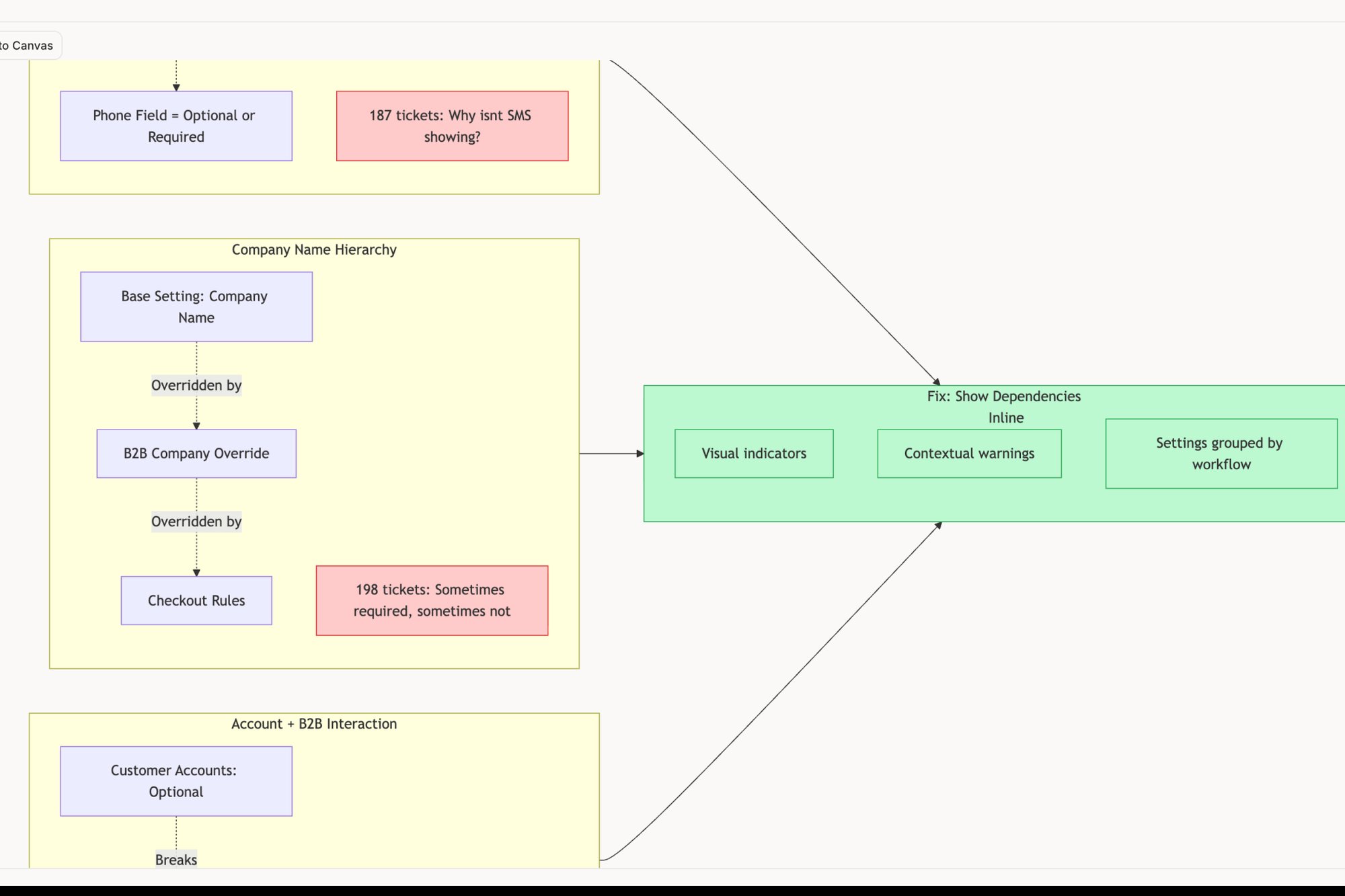

This plan moves beyond static wireframes to show the dynamic reality of user behavior. It forces teams to think in sequences, not just states. Last week I watched a PM spend an entire afternoon trying to explain a complex checkout sequence with words. A simple flow diagram would have taken 30 minutes and saved everyone hours of confusion. This is a powerful management plan example because it codifies empathy at a system level, ensuring a smooth experience even when things go wrong.

Strategic Breakdown

Persona & Scenario Definition: The map begins with a specific user (persona) trying to achieve a specific goal (scenario). For example: "A new project manager (persona) is trying to add a task for a developer (scenario)."

Touchpoint Mapping: Document every screen, button, email, and notification the user interacts with along the path. This should include both in-app and out-of-app touchpoints.

Pain Point Identification: Connect the flow to real data. Use analytics to pinpoint where users get stuck or abandon the process. Annotate these steps with qualitative insights from user interviews or support tickets.

Opportunity Highlighting: Each pain point is an opportunity for improvement. The map should explicitly call out areas for redesign, simplification, or new feature development to ease the user's journey.

Actionable Takeaways

Map the Unhappy Path: Don't just chart the ideal journey. What happens during network failures, permission errors, or invalid inputs? Mapping out the edge cases for a Dropbox file upload reveals the true complexity of a seemingly simple feature.

Create Different Maps for Different Users: A power user's journey looks very different from a first-time user's. Segment your flows by user persona to ensure the experience works for everyone.

Validate with QA and Engineering: Share your flow maps with QA teams. They are experts at finding untested scenarios and can help you build more resilient products.

Treat it as a Strategic Tool: A user journey map isn't just a design artifact; it's a strategic document that aligns the entire team on the user's reality. To dig deeper, you can explore the fundamentals of what a user journey map is and how it drives product decisions.
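One lightweight way to make sure the unhappy paths actually make it onto the map is to treat the flow as a directed graph and enumerate every path from entry to exit. The sketch below uses a hypothetical file-upload flow (the step names are illustrative, not taken from any real product) and skips retry loops so the enumeration terminates. Any path that doesn't end in success is an unhappy path that needs a screen, a message, or a test.

```python
# Hypothetical upload flow: each step maps to the steps it can lead to.
FLOW: dict[str, list[str]] = {
    "select_file": ["upload", "cancelled"],
    "upload": ["uploaded", "network_error", "permission_error"],
    "network_error": ["upload", "cancelled"],  # retry or give up
    "permission_error": ["cancelled"],
    "uploaded": [],   # terminal: success
    "cancelled": [],  # terminal: user exits
}

SUCCESS = "uploaded"


def simple_paths(flow: dict[str, list[str]], start: str) -> list[list[str]]:
    """Return every path from start to a terminal step, never revisiting a step."""
    paths: list[list[str]] = []

    def walk(step: str, path: list[str]) -> None:
        path = path + [step]
        if not flow[step]:       # terminal step: record the finished path
            paths.append(path)
            return
        for nxt in flow[step]:
            if nxt not in path:  # skip retry loops so enumeration terminates
                walk(nxt, path)

    walk(start, [])
    return paths


for path in simple_paths(FLOW, "select_file"):
    label = "happy  " if path[-1] == SUCCESS else "unhappy"
    print(f"{label}: {' -> '.join(path)}")
```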

3. Design System Token Management Plan

A design system token is not just a hex code; it's an encoded decision. It is the atomic unit of your brand’s visual language, a single source of truth that defines everything from color-brand-primary to spacing-medium. A token management plan is the governance layer that prevents this system from collapsing into chaos. It provides a framework for creating, updating, and distributing these design decisions, ensuring every button and banner feels like it belongs.

Without this plan, you get design debt.

You get five different shades of "brand blue" and spacing that feels just a little bit off between products. This plan is the antidote to inconsistency at scale. For example, Salesforce’s Lightning Design System is a masterclass in token management, creating a universal grammar that allows thousands of developers to build cohesive experiences. This is an essential management plan example because it codifies consistency.

Strategic Breakdown

Token Naming & Hierarchy: Establish a clear, logical naming convention. A tiered system (e.g., global.color.blue.500 -> alias.color.interactive -> component.button.primary.background) allows for both broad changes and specific overrides.

Governance & Contribution Model: Define who can propose, approve, and publish token changes. Is it a centralized team or a federated model? Document the process for introducing new tokens or deprecating old ones.

Tooling & Distribution: Specify how tokens are managed (e.g., in Figma Tokens or Style Dictionary) and distributed to different platforms (iOS, Android, Web). The goal is a single source of truth that automatically propagates everywhere.

Documentation & Usage Guidelines: A token is useless if no one knows how to use it. Documentation must explain the why behind each token. You can explore how to use design tokens to see how these concepts translate into practical application.
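The tiered naming above works because component tokens point at aliases, which point at global values. Here is a minimal resolver, assuming a hypothetical flat map where "{...}" marks a reference to another token. The format is loosely modeled on the curly-brace aliases used by tools like Style Dictionary, but it is not their API, and the hex values are placeholders.

```python
import re

# Hypothetical flat token map; "{...}" marks a reference to another token.
TOKENS: dict[str, str] = {
    "global.color.blue.500": "#0B5CFF",
    "alias.color.interactive": "{global.color.blue.500}",
    "component.button.primary.background": "{alias.color.interactive}",
}

REFERENCE = re.compile(r"^\{([^{}]+)\}$")


def resolve(name: str, tokens: dict[str, str], seen: tuple[str, ...] = ()) -> str:
    """Follow alias references until a raw value is reached."""
    if name in seen:
        raise ValueError("circular reference: " + " -> ".join(seen + (name,)))
    if name not in tokens:
        raise KeyError(f"unknown token: {name}")
    value = tokens[name]
    match = REFERENCE.match(value)
    if match:  # the value is an alias: resolve the token it points to
        return resolve(match.group(1), tokens, seen + (name,))
    return value


print(resolve("component.button.primary.background", TOKENS))
# Rebrand: change the global value once and every alias picks it up.
rebranded = {**TOKENS, "global.color.blue.500": "#1A73E8"}
print(resolve("component.button.primary.background", rebranded))
```

Because the button only references the alias, a rebrand is a one-line change to the global token, while a one-off override touches only the component token. The cycle check turns a careless alias edit into a clear error instead of infinite recursion.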

Actionable Takeaways

Automate Everything Possible: Connect your token library directly to your codebase. When a token is updated in Figma, it should automatically create a pull request in your repositories.

Version Your Tokens: Treat design tokens like software. Version them alongside product releases so you have a clear history of changes and can manage breaking updates gracefully.

Document the Rationale: Explain the "why" behind each token family. Why does your primary button use blue-500? This context helps designers and engineers make better decisions when extending the system.

Plan for Exceptions: No system is perfect. Create a documented process for "breaking the system" when a specific use case demands it. This prevents unofficial exceptions from becoming unmanaged design debt.

4. Quality Assurance and Test Case Management Plan

A Quality Assurance (QA) plan isn't a checklist; it's an immune system for your product. It’s the systematic process that detects vulnerabilities and ensures the core functionality remains healthy through every release cycle. This plan is the framework for defining how quality is measured and maintained, transforming testing from a reactive scramble into a proactive, integrated discipline.

A mature QA plan ensures that what you build is not only functional but also resilient. It’s the difference between shipping code and shipping confidence. A friend at a Series C company told me their biggest engineering delay last quarter wasn't a coding problem; it was a testing bottleneck. Why? Because the test cases were written after development was complete, revealing fundamental logic flaws that forced a major rewrite. A QA plan that writes test cases alongside the requirements prevents exactly this, which is why it is a powerful management plan example: it codifies reliability at the protocol level.

Strategic Breakdown

Test Strategy & Scope: Define what gets tested and how. This includes outlining the types of testing (functional, performance, security) and the environments (staging, production). Clarity here prevents redundant effort.

Coverage & Traceability: Map test cases directly back to product requirements or user stories. This creates a clear link between what was asked for and what was tested, ensuring no feature is left behind.

Resource & Environment Planning: Detail who is responsible for testing, what tools will be used (e.g., automated testing frameworks, bug tracking software), and what the specific server or device configurations are.

Defect Management: Establish a clear process for how bugs are reported, triaged, prioritized, assigned, and resolved. This includes defining severity levels to ensure the most critical issues are addressed first. To ensure your product meets the highest standards, delve into these essential quality assurance best practices.
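Coverage and traceability can start as a simple cross-check between requirement IDs and the IDs each test case claims to verify. The sketch below uses hypothetical REQ-/TC- identifiers; in practice they would come from your PRD and your test management tool.

```python
# Hypothetical requirement IDs from a PRD and the test cases that claim to cover them.
REQUIREMENTS: dict[str, str] = {
    "REQ-1": "User can schedule a meeting",
    "REQ-2": "User can reschedule a meeting",
    "REQ-3": "Invitees are notified of changes",
}
TEST_CASES: dict[str, list[str]] = {
    "TC-01": ["REQ-1"],
    "TC-02": ["REQ-1", "REQ-3"],
    "TC-03": ["REQ-4"],  # references a requirement that doesn't exist
}


def coverage_report(
    requirements: dict[str, str], test_cases: dict[str, list[str]]
) -> tuple[list[str], list[str]]:
    """Return (requirements with no test, tests pointing at unknown requirements)."""
    covered = {req for reqs in test_cases.values() for req in reqs}
    uncovered = sorted(set(requirements) - covered)
    orphaned = sorted(
        tc for tc, reqs in test_cases.items() if not set(reqs) <= set(requirements)
    )
    return uncovered, orphaned


uncovered, orphaned = coverage_report(REQUIREMENTS, TEST_CASES)
print("Untested requirements:", uncovered)
print("Tests with unknown requirements:", orphaned)
```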

Actionable Takeaways

Prioritize with Data: Don't test everything with equal rigor. Use analytics to identify the most critical user paths and focus your most intensive testing efforts there.

Automate the Repetitive: Manual regression testing is slow and error-prone. Automate your core regression suite to run continuously, freeing up your QA team to focus on more complex exploratory testing.

Make Accessibility a Standard: Integrate accessibility testing into your standard test plan, not as an afterthought. This ensures your product is usable by the widest possible audience from day one.

Generate Tests from Specs: Don't wait for a fully built feature to start writing tests. You can create comprehensive test cases directly from design specifications, catching logical flaws before code is written. A great example can be seen in these test cases for a scheduling feature.

5. A/B Testing and Experimentation Management Plan

An A/B test isn't a coin flip; it's a conversation with your user base at scale. It’s the process of isolating a single change, showing it to a segment of your audience, and letting their behavior declare the winner. An experimentation management plan is the rigorous framework that turns gut feelings into quantifiable data. It systematizes how you ask questions and measure the answers.

This structured approach prevents "innovation" from becoming a random walk. It ensures that every change, from button color to a complete onboarding flow, is a deliberate step toward a defined business goal. Companies like Netflix and Amazon didn't build their empires on lucky guesses. They built experimentation platforms that run thousands of concurrent tests, creating a Darwinian environment where only the fittest user experiences survive. This is a powerful management plan example because it replaces opinion with evidence.

Strategic Breakdown

Hypothesis Formation: Every test begins with a clear, falsifiable hypothesis. "Changing the signup button from blue to green will increase conversions" is a guess. "Based on heatmaps showing low engagement with our current blue CTA, we believe a higher-contrast green button will increase signup form submissions by 5% over 14 days because it improves visual salience." That's a hypothesis.

Success Metrics: Define your primary metric before the test starts. Also, define "guardrail" metrics to monitor for unintended negative consequences, like a drop in session duration or an increase in support tickets.

Audience Segmentation: Clearly define who will see the test. Is it for new users in North America on iOS? Or for power users active for more than 90 days? Proper segmentation ensures clean results.

Result Analysis & Documentation: Plan how you will determine statistical significance. Crucially, document the results whether the test wins, loses, or is inconclusive. A "failed" test that prevents you from shipping a harmful change is a huge success.
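For a conversion-style primary metric, one common way to check significance is a two-proportion z-test. The sketch below is a minimal standard-library version. It assumes independent users, a sample size fixed in advance, and samples large enough for the normal approximation; the conversion counts in the usage line are hypothetical.

```python
import math


def two_proportion_z_test(
    conv_a: int, n_a: int, conv_b: int, n_b: int
) -> tuple[float, float]:
    """Two-sided z-test for a difference in conversion rates (pooled variance).

    Returns (z, p_value). Assumes independent users and large enough samples
    for the normal approximation to hold.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in the data; cannot test")
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical results: control (A) vs. variant (B), 10,000 users each.
z, p = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=580, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at alpha = 0.05: {p < 0.05}")
```

Decide alpha before launch and check the guardrail metrics the same way; a significant lift in the primary metric doesn't excuse a significant drop elsewhere.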

Actionable Takeaways

Ground Hypotheses in Reality: Use analytics, user session recordings, and qualitative feedback to find real problems before you start proposing solutions.

Avoid Overlapping Tests: Don't run multiple tests on the same page or user flow at the same time. Overlapping changes contaminate your data, making it impossible to know which change caused the observed behavior.

Calculate Sample Size First: Use a sample size calculator to determine how many users you need per variant to reliably detect the smallest effect you care about. Stopping a test before it reaches that sample size is a common error.

Build a Knowledge Base: Create a central repository for all experiment results. Detailing the hypothesis, variants, and outcomes creates a powerful institutional memory that prevents teams from re-running old tests.
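Sample size calculators typically implement a formula like the one below: the number of users per variant needed to detect a given relative lift over a baseline conversion rate, at a chosen significance level (alpha) and statistical power. This is the standard normal-approximation formula for comparing two proportions; the 5% baseline and 10% lift in the usage line are illustrative.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(
    baseline: float, relative_lift: float, alpha: float = 0.05, power: float = 0.8
) -> int:
    """Users needed per variant to detect baseline -> baseline * (1 + relative_lift).

    Normal-approximation formula for comparing two proportions (two-sided test).
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    if not (0 < p1 < 1 and 0 < p2 < 1) or p1 == p2:
        raise ValueError("rates must be in (0, 1) and differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


# Illustrative: 5% baseline conversion, hoping to detect a 10% relative lift.
print(sample_size_per_variant(baseline=0.05, relative_lift=0.10))
```

Halving the detectable lift roughly quadruples the required sample, which is why tests of small changes so often end too early.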

6. Product Roadmap and Feature Release Management Plan

A product roadmap isn't a project plan; it's a statement of intent. It is a strategic narrative that communicates where the product is headed and, more importantly, why. A roadmap and feature release management plan is the system that keeps this narrative coherent. It connects high-level company vision to the granular details of a specific feature release, ensuring every sprint contributes to a larger strategic goal.

This plan translates strategy into execution. It’s the connective tissue between the C-suite’s quarterly goals and an engineer's daily tasks. For instance, Notion's public roadmap doesn't just list features; it communicates themes and problems they are solving. This context builds trust and manages expectations with their user base. This is a powerful management plan example because it aligns internal teams and external customers around a shared vision.

Strategic Breakdown

Vision & Strategic Pillars: The plan must start with the overarching product vision and key strategic themes for the next 6-12 months. A pillar might be "Improve Team Collaboration" or "Increase New User Activation."

Prioritization Framework: It must define how features are chosen. This isn't guesswork; it’s a system. Whether using RICE (Reach, Impact, Confidence, Effort) or weighting features against company OKRs, the framework should be explicit.

Tiered Timelines: Roadmaps should operate on multiple horizons. Use broad strokes for the long term (e.g., "Next," "Later"), quarterly goals for the medium term, and sprint-level plans for the immediate future. This builds in flexibility.

Release Cadence & Communication: Define the process for shipping. This includes QA handoffs, phased rollouts (e.g., internal beta, 10% of users), and the communication plan for stakeholders and customers for each release.
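Phased rollouts like "10% of users" are often implemented with deterministic hashing, so a given user lands in the same bucket every time and stays enabled as the percentage grows. A minimal sketch with hypothetical feature and user IDs follows; a dedicated feature-flag service would typically add targeting, kill switches, and auditing on top.

```python
import hashlib


def rollout_bucket(user_id: str, feature: str) -> float:
    """Map a (feature, user) pair to a stable number in [0, 100)."""
    key = f"{feature}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    return int(digest[:8], 16) / 2**32 * 100


def is_enabled(
    user_id: str,
    feature: str,
    percent: float,
    internal_users: frozenset[str] = frozenset(),
) -> bool:
    """Internal beta users always see the feature; everyone else by rollout percentage."""
    if user_id in internal_users:
        return True
    return rollout_bucket(user_id, feature) < percent


# Hypothetical IDs: a user enabled at 10% stays enabled at 50% and 100%.
print(is_enabled("user-42", "new-editor", 10))
print(is_enabled("staff-1", "new-editor", 0, internal_users=frozenset({"staff-1"})))
```

Salting the hash with the feature name keeps buckets independent across features, so the same 10% of users don't end up as guinea pigs for every release.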

Actionable Takeaways

Focus on Problems, Not Features: Frame roadmap items as "user problems to solve" instead of "features to build." This encourages creative solutions and keeps the focus on customer value.

Communicate the "Why": Never present a roadmap without the rationale. Explain the data or user research that justifies each priority. Context prevents the roadmap from feeling like a random wish list.

Map Dependencies Early: Before committing a feature to a quarter, identify its dependencies across teams. A quick dependency mapping session can prevent significant downstream delays.

Review and Adapt Quarterly: A roadmap is a living document, not a stone tablet. Hold a formal review each quarter to assess progress, incorporate new learnings, and adjust priorities.
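A dependency mapping session produces, in effect, a directed graph. Python's standard-library graphlib module can turn that graph into a safe delivery order and flag circular dependencies before they reach a sprint. The roadmap items below are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical roadmap items, each mapped to the items it depends on.
DEPENDENCIES: dict[str, set[str]] = {
    "auth-refactor": set(),
    "permissions-api": {"auth-refactor"},
    "team-workspaces": {"permissions-api"},
    "shared-templates": {"team-workspaces"},
}


def delivery_order(deps: dict[str, set[str]]) -> list[str]:
    """Return an order in which every item comes after its dependencies."""
    try:
        return list(TopologicalSorter(deps).static_order())
    except CycleError as err:
        # err.args[1] holds the nodes that form the cycle
        raise ValueError(f"circular dependency: {err.args[1]}") from err


print(delivery_order(DEPENDENCIES))
```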

7. UX Review and Design QA Management Plan

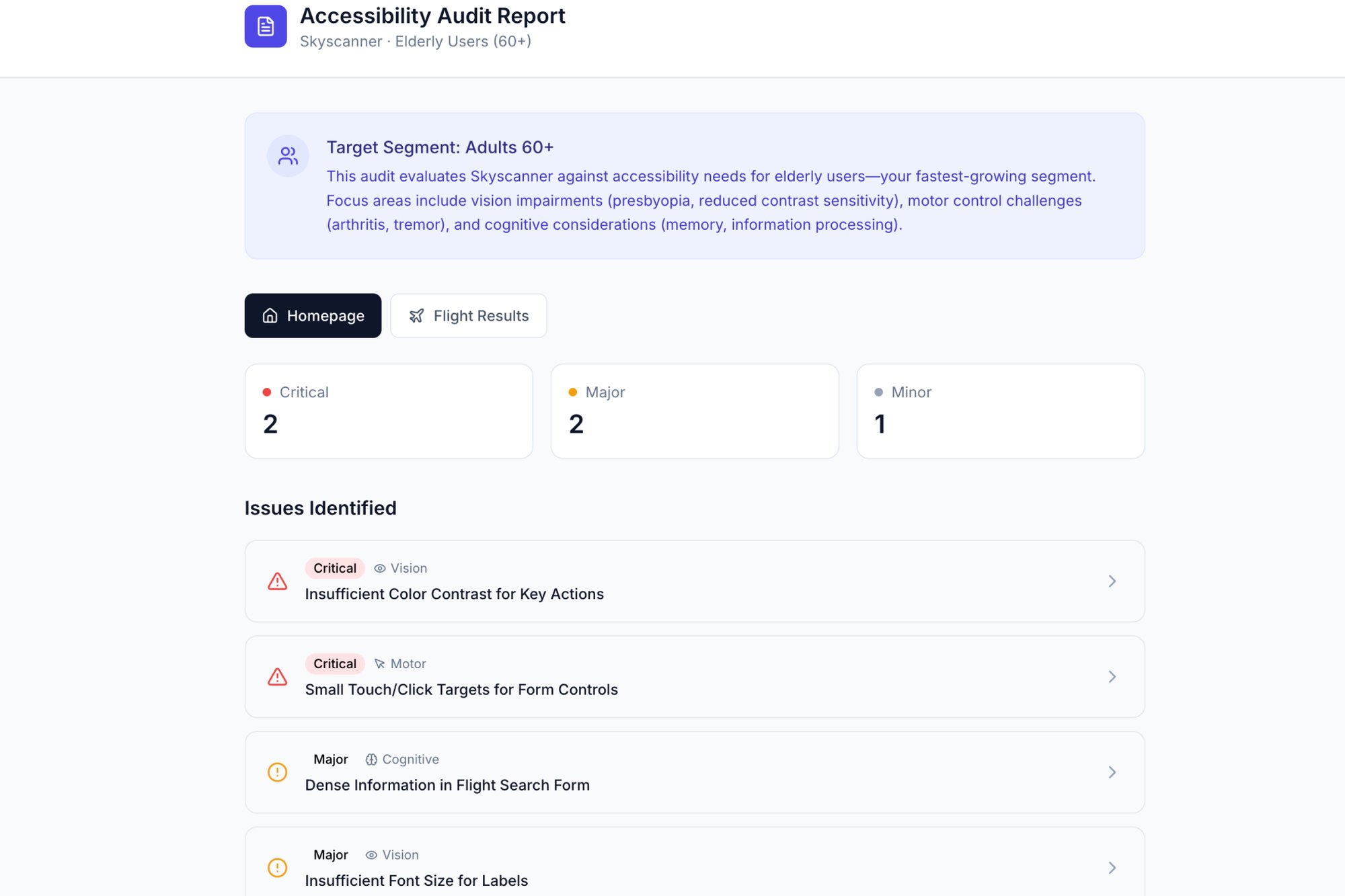

A design isn’t finished when it's pixel-perfect in Figma; it's finished when it survives contact with reality. A UX Review and Design QA plan is the systematic filter between a designer’s intent and a developer’s implementation. It’s a formal process to catch inconsistencies, usability flaws, and accessibility gaps before they become cemented in code. This framework ensures that what was promised in the prototype is what actually ships.

This management plan example transforms design handoff from a hopeful toss over the wall into a rigorous inspection. It codifies quality control. For instance, Airbnb’s legendary design review process ensures every new feature feels cohesive with the entire platform, protecting brand identity and user trust with every commit. The basic gist is this: good design QA makes it easier to do the right thing than the wrong thing.

Strategic Breakdown

Codified Criteria: The plan must move beyond "does it look good?" It uses a concrete checklist based on the design system. Does it use the correct spacing tokens? Is the component variant appropriate for this context?

Accessibility as a Mandate: This isn't an afterthought; it's a core checkpoint. The plan specifies standards (like WCAG 2.1 AA), requiring checks for color contrast, keyboard navigation, and screen reader compatibility.

Multi-Disciplinary Review: A great design QA plan includes not just designers but also engineers and product managers. Engineers spot implementation hurdles early, while product managers ensure the final design still solves the original user problem.

Documented Decisions: Every review's findings and the resulting changes must be documented. This creates a rationale log that keeps the same debates from happening again. You can see how an accessibility audit report for Skyscanner would feed directly into this process.
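Color contrast is the most mechanical of these checks. WCAG 2.1 defines contrast ratio as (L1 + 0.05) / (L2 + 0.05), where L1 and L2 are the relative luminances of the lighter and darker colors, and Level AA requires at least 4.5:1 for normal text and 3:1 for large text. A minimal checker, assuming six-digit hex colors:

```python
def relative_luminance(hex_color: str) -> float:
    """WCAG 2.1 relative luminance of a six-digit sRGB hex color like '#1A73E8'."""
    value = hex_color.lstrip("#")
    if len(value) != 6:
        raise ValueError(f"expected a six-digit hex color, got {hex_color!r}")
    channels = [int(value[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Linearize each sRGB channel using the WCAG 2.1 piecewise formula.
    r, g, b = [c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4 for c in channels]
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: str, background: str) -> float:
    """(L1 + 0.05) / (L2 + 0.05), with L1 the lighter of the two luminances."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """WCAG 2.1 Level AA: 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


print(f"{contrast_ratio('#767676', '#FFFFFF'):.2f}", passes_aa("#767676", "#FFFFFF"))
print(f"{contrast_ratio('#777777', '#FFFFFF'):.2f}", passes_aa("#777777", "#FFFFFF"))
```

Gray text on white is a good borderline case to keep in your checklist: #767676 just passes AA for body text, while the visually identical #777777 just fails.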

Actionable Takeaways

Automate the Obvious: Use plugins and automated tools to check for simple things like color contrast, broken design tokens, or accessibility basics. This frees up human reviewers for more nuanced usability issues.

Build a Review Checklist: Create a living checklist template specific to your product. Include items for brand consistency, component usage, copy clarity, interaction states, and accessibility.

Review in the Right Environment: Don't just review static Figma screens. The most critical QA happens on a staging server or a live preview, where you can actually interact with the feature.

Timebox the Feedback Loop: Set clear deadlines for review cycles. A design review shouldn't be an indefinite debate. Provide feedback, decide on changes, and move forward.

8. Analytics and Data-Driven Decision Management Plan

Data without a plan is just noise. An analytics management plan is the instrument that tunes this noise into a signal. It's a framework that governs how a team collects, interprets, and acts on user behavior data, transforming gut feelings into quantifiable hypotheses. This plan ensures that every product decision is a calculated bet with a defined way to measure its success, rather than a shot in the dark.

This process prevents teams from mistaking activity for progress. It provides a shared language of metrics and a cadence for review. Why does this matter at scale? Because without a plan, every team defines "success" differently, leading to strategic drift. A good analytics plan aligns incentives. For instance, Airbnb's relentless A/B testing culture is legendary: every design change is measured against its impact on bookings. This approach represents an excellent management plan example because it institutionalizes learning.

Strategic Breakdown

Define Your North Star: Start with a single, overarching metric that captures the core value your product delivers to customers. All other metrics should ladder up to this North Star Metric (NSM).

Instrument Key Funnels: Map the critical user journeys, like activation and retention. Define key performance indicators (KPIs) for each step and ensure your analytics tools are capturing this data accurately. For instance, you could track the setup flow for a Shopify checkout experience to pinpoint drop-off points.

Establish a Decision Cadence: Document who reviews which dashboards, how often, and what actions are triggered by specific data thresholds. This could be a weekly metrics review or automated alerts for anomalies.

Standardize Reporting: Create a central, accessible dashboard that serves as the single source of truth for product health. This democratizes data and aligns everyone on the same set of facts.
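Pinpointing drop-off comes down to step-to-step conversion. The sketch below uses hypothetical counts for a four-step checkout funnel; in practice the counts would come from your analytics tool's event data.

```python
# Hypothetical counts of users reaching each step of a checkout funnel.
FUNNEL: list[tuple[str, int]] = [
    ("viewed_cart", 10_000),
    ("entered_shipping", 6_500),
    ("entered_payment", 5_200),
    ("completed_order", 3_900),
]


def step_conversion(funnel: list[tuple[str, int]]) -> list[tuple[str, str, float]]:
    """Return (from_step, to_step, conversion rate) for each adjacent pair of steps."""
    return [
        (step_a, step_b, (n_b / n_a) if n_a else 0.0)
        for (step_a, n_a), (step_b, n_b) in zip(funnel, funnel[1:])
    ]


steps = step_conversion(FUNNEL)
for step_a, step_b, rate in steps:
    print(f"{step_a} -> {step_b}: {rate:.0%}")

worst = min(steps, key=lambda s: s[2])
print(f"Biggest drop-off: {worst[0]} -> {worst[1]}")
print(f"End-to-end conversion: {FUNNEL[-1][1] / FUNNEL[0][1]:.0%}")
```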

Actionable Takeaways

Tie Metrics to Objectives: Every metric you track should directly connect to a business or user goal. If it doesn't, it's a vanity metric.

Segment Your Users: Don't look at averages. Use cohort analysis to understand how different user segments behave over time, revealing patterns that averages hide.

Master the Process: Building a culture around analytics requires more than just tools. A core component of an effective analytics plan is the ability to master data-driven decision-making.

Close the Loop: The plan isn't complete until it specifies how insights translate into action. Each review meeting should end with clear next steps, whether it's a new A/B test or a user research initiative.
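Here is a minimal illustration of why averages hide patterns. It groups a hypothetical activity log by signup week and computes the share of each cohort active N weeks after signup. It assumes every user is active in their signup week, so cohort size equals the number of distinct users seen.

```python
from collections import defaultdict

# Hypothetical activity log: (user_id, signup_week, week_active).
ACTIVITY: list[tuple[str, int, int]] = [
    ("u1", 1, 1), ("u1", 1, 2), ("u1", 1, 3),
    ("u2", 1, 1),
    ("u3", 2, 2), ("u3", 2, 3),
    ("u4", 2, 2), ("u4", 2, 3),
]


def retention_by_cohort(activity: list[tuple[str, int, int]]) -> dict[int, list[float]]:
    """Map signup week -> share of the cohort active 0, 1, 2, ... weeks after signup."""
    cohort_users: dict[int, set[str]] = defaultdict(set)
    active: dict[tuple[int, int], set[str]] = defaultdict(set)
    for user, signup_week, week in activity:
        cohort_users[signup_week].add(user)
        active[(signup_week, week - signup_week)].add(user)

    table: dict[int, list[float]] = {}
    for signup_week, users in sorted(cohort_users.items()):
        last_offset = max(off for (week, off) in active if week == signup_week)
        table[signup_week] = [
            len(active.get((signup_week, off), set())) / len(users)
            for off in range(last_offset + 1)
        ]
    return table


for cohort, shares in retention_by_cohort(ACTIVITY).items():
    print(f"week {cohort} cohort:", " ".join(f"{s:.0%}" for s in shares))
```

Blended together, 75% of these users were active one week after signup. Split by cohort, the first retained only half while the second retained everyone, which is exactly the kind of pattern an average hides.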

From Blueprint to Reality

We've dissected PRDs, mapped user journeys, and worked through token systems, QA plans, experiments, roadmaps, design reviews, and analytics. Each management plan example served not as a rigid cage, but as a compass. A compass doesn't steer the ship; it simply provides a fixed point of reference, allowing the captain to navigate changing winds with confidence. That is the true function of these documents: they are instruments for confident navigation.

The common thread woven through these plans is a commitment to foresight. It’s the practice of thinking through dependencies before they become blockers. A friend at a SaaS company once described their planning process not as creating a document, but as "pre-loading conversations." Every section of a well-crafted plan is a conversation you’ve had with your future self, your team, and your leadership, recorded for clarity. This is the shift from reactive problem-solving to proactive system-building.

Your Next Step: From Passive Reading to Active Planning

Seeing a management plan example is one thing. Building one under pressure is another entirely. The temptation is to treat these plans as administrative burdens.

This is a critical error.

The value isn't in the finished PDF; it's in the shared understanding forged during its creation. As Dwight D. Eisenhower famously noted, "Plans are worthless, but planning is everything." The act of planning forces alignment and exposes assumptions. In short, it builds the connective tissue a team needs to execute effectively.

Your immediate takeaway should be to pick one area of friction in your current workflow. Is it scope creep? Unclear QA standards? Find the corresponding plan we discussed and create a "minimum viable version."

Start with these three questions:

What is the single biggest ambiguity this plan could resolve for my team right now?

Who are the three most critical people that need to agree on this plan for it to work?

What would the simplest possible version of this document look like?

A plan that is used is infinitely more valuable than a perfect one that collects dust. A clear set of test cases, like those generated for the Wise Card Freeze flow, doesn't just improve quality; it reduces the back-and-forth between QA and engineering by defining "done" upfront. It’s a tool for efficiency, not bureaucracy. Don't just admire the examples. Go build the clarity your team is waiting for.

Tired of static documents that go stale the moment you hit save? Figr helps you create living, breathing plans from PRDs to user flows and test cases, all powered by AI to accelerate your process. Turn your initial ideas into actionable artifacts at Figr and make your next management plan the start of a conversation, not the end of one.