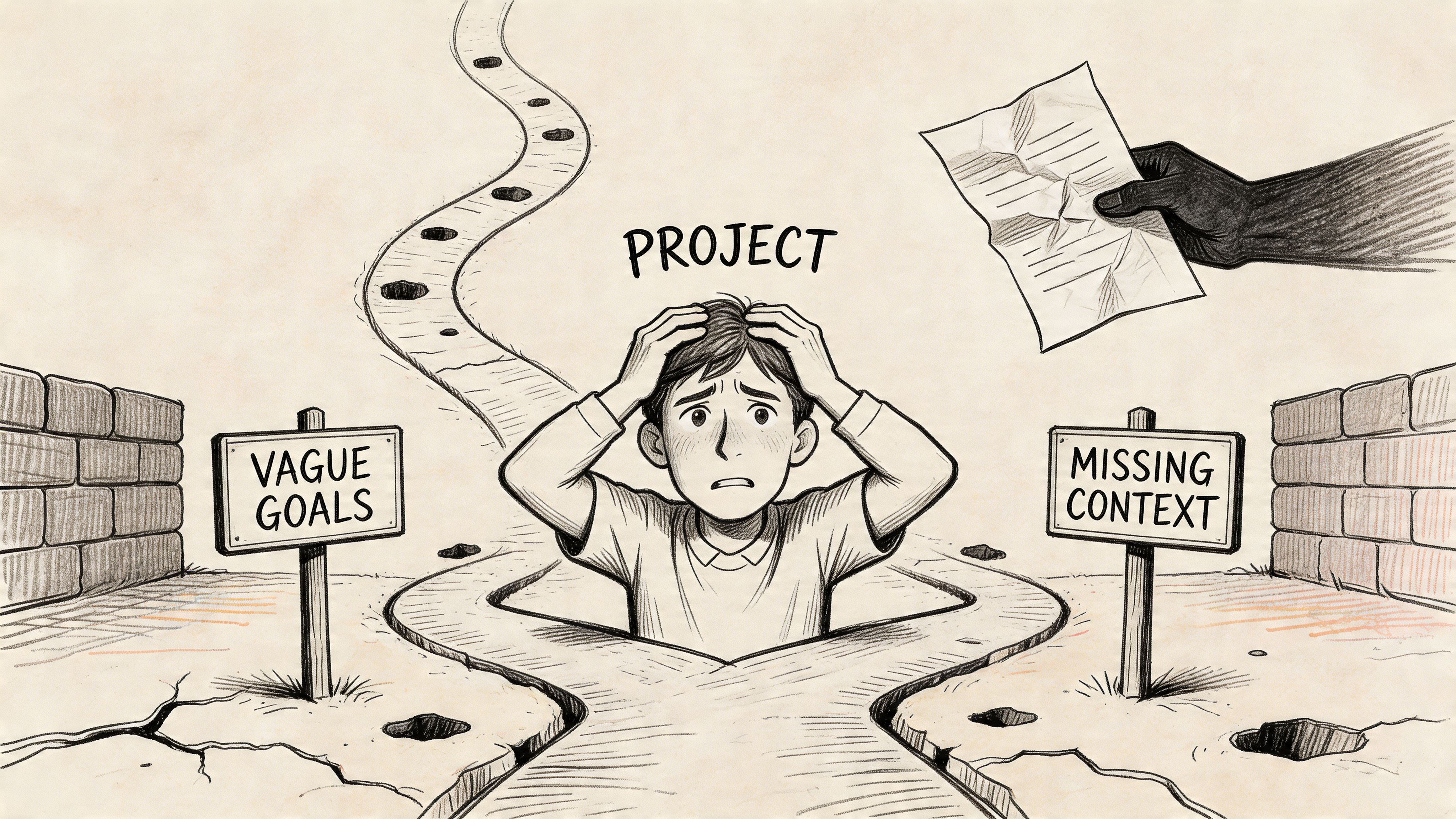

Monday morning, a week after launch, the room goes quiet in a way every product team recognizes. The dashboard looks wrong. Support has a growing thread. Engineering is talking about edge cases, design is pointing at the intended flow, and the PM is replaying kickoff notes trying to find the moment the project drifted.

Usually, that moment wasn't dramatic.

It was a sentence nobody challenged. A goal that sounded clear until three disciplines interpreted it three different ways. A success metric that lived in someone's head instead of the project doc. If you've ever lived through the scramble that follows a shaky release, you know the pain isn't just technical. It's the feeling that a team did serious work in parallel, only to discover they were building adjacent versions of the same idea.

That's why the definition of a design brief matters more than most teams acknowledge. Not as paperwork. Not as a ritual artifact. As the first alignment mechanism a project gets.

The Ghost of a Bad Launch

A bad launch has a ghost. You can feel it in the retrospective.

The feature works, technically. Users can click through it. Nothing is on fire in the strict engineering sense. But adoption is flat, QA is still finding strange corners, and every function on the team has a different explanation for what went wrong. Marketing thought the release was about discoverability. Design optimized for clarity. Engineering optimized for delivery against a narrow implementation. Product thought the mission was retention.

All of those can be reasonable choices.

Together, they can still ship the wrong thing.

I think of this as Project Drift. Not a dramatic collapse, just a slow veering off course caused by untested assumptions at kickoff. A PM says, "We need to simplify onboarding," and nobody asks whether simplify means fewer steps, fewer decisions, faster completion, or lower support burden. The team fills in the blanks from experience. That feels efficient at first. Later, it becomes expensive.

A friend at a Series C company told me about a launch review where the team spent most of the meeting arguing over the original intent of the feature. That was the fundamental failure. When a project needs archaeology after release, the kickoff wasn't strong enough.

You can see versions of this pattern in almost every account of a messy new product launch process. The visible issue might be churn, confusion, or rework. The hidden issue is usually alignment debt.

When the problem isn't a bug

Teams often diagnose launch pain as a delivery problem because delivery is easier to see. But many launch failures start before a single screen gets designed.

Common signs include:

- Shifting success criteria: The team debates outcomes late because nobody locked them early.

- Scope inflation: Nice-to-haves quietly become requirements.

- Conflicting assumptions: Different functions optimize for different users, moments, or constraints.

- Weak handoffs: Designers think in flows, engineers think in tickets, and nobody owns the translation layer.

A project rarely falls apart all at once. It drifts in small, polite misunderstandings.

That drift is exactly what a design brief is meant to prevent. At its best, it gives the team a shared definition of the problem, the boundaries, and the evidence of success before production pressure starts flattening nuance.

The brief as anchor

Many teams still treat the brief like a formality. Fill it out. Share it in Slack. Move on.

That approach misses the point. A useful brief doesn't exist to document consensus that already happened. It exists to force the hard conversations that haven't happened yet.

When the brief is strong, kickoff feels slower for a day and faster for the next six weeks. When the brief is weak, kickoff feels quick and the rest of the project pays for it.

What a Design Brief Really Is

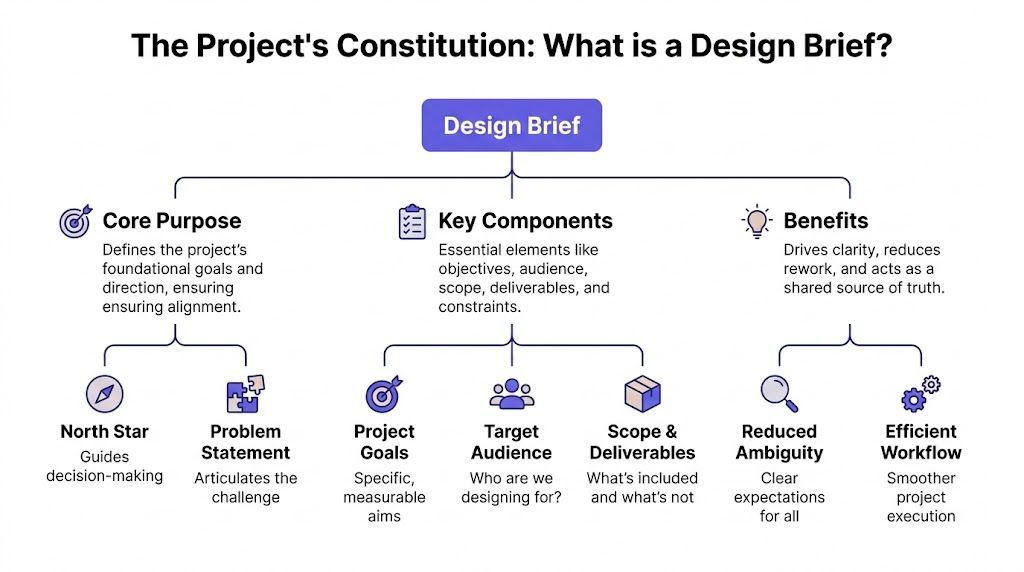

A design brief is often described as a summary document. That's technically true and strategically incomplete.

A better design brief definition is this: a project constitution. It's the foundational agreement that tells the team what problem it's solving, what constraints apply, what success looks like, and how to make trade-offs when pressure arrives. Constitutions matter because they settle arguments before they happen. Good briefs do the same.

The basic gist is this: a design brief isn't there to capture everything. It's there to capture the right things so teams stop guessing.

A 2018 Design Society paper, Design Briefs: Is There a Standard?, found that briefs range from a single line to multi-page documents and that no universal standard exists. The same research identifies seven key elements that belong in a brief: project overview and background, client narrative, desired outcomes, target audience, constraints, deliverables, and success metrics.

That list is useful because it gives structure without pretending one template fits every team.

The non-negotiable elements

Here is what each element does in practice.

| Element | What it answers | Why it matters |
|---|---|---|
| Project overview and background | Why are we doing this now? | Prevents teams from designing in a vacuum |
| Client narrative | What does the stakeholder believe is happening? | Surfaces bias, urgency, and political context |
| Desired outcomes | What should change if this works? | Keeps effort tied to outcomes, not artifacts |
| Target audience | Who is this for, specifically? | Stops teams from building for an imagined average user |
| Constraints | What can't move? | Makes trade-offs explicit early |
| Deliverables | What exactly must be produced? | Removes ambiguity at handoff |
| Success metrics | How will we know it worked? | Turns opinions into testable judgment |

Most bad briefs are missing one of these. The worst briefs contain all seven in name but not in substance.

For example, "Target audience: enterprise users" isn't useful. "Constraints: standard timeline" isn't useful either. Vague fields create fake confidence, which is often more dangerous than obvious gaps.
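One way to make the "in name but not in substance" problem concrete is to treat the seven elements as required fields and flag any that are empty, very short, or match a known placeholder phrase. The sketch below is illustrative only: the `DesignBrief` class, the `VAGUE_PHRASES` set, the five-word minimum, and the sample field values are all assumptions made for this example, not something the cited research prescribes.

```python
from dataclasses import dataclass, fields

# Hypothetical placeholder phrases; a real team would maintain its own list.
VAGUE_PHRASES = {"enterprise users", "standard timeline", "tbd", "n/a"}
MIN_WORDS = 5  # arbitrary threshold, chosen only for illustration


@dataclass
class DesignBrief:
    """The seven elements of a brief, one free-text field each."""
    overview: str
    client_narrative: str
    desired_outcomes: str
    target_audience: str
    constraints: str
    deliverables: str
    success_metrics: str


def vague_fields(brief: DesignBrief) -> list[str]:
    """Return names of fields that are placeholder-like or shorter than MIN_WORDS."""
    flagged = []
    for f in fields(brief):
        text = getattr(brief, f.name).strip()
        if text.lower() in VAGUE_PHRASES or len(text.split()) < MIN_WORDS:
            flagged.append(f.name)
    return flagged


# Sample values are invented for illustration.
brief = DesignBrief(
    overview="Admins are a bottleneck for routine access changes in enterprise accounts.",
    client_narrative="Sales believes missing custom roles is stalling enterprise deals.",
    desired_outcomes="Admins can delegate tasks without granting full workspace access.",
    target_audience="enterprise users",
    constraints="standard timeline",
    deliverables="Role creation flows, annotated prototype, acceptance criteria, QA scenarios.",
    success_metrics="Fewer access-related support requests within one quarter of launch.",
)
print(vague_fields(brief))  # ['target_audience', 'constraints']
```

A check like this only catches the laziest placeholders. It cannot tell whether a long, fluent field actually settles anything, which is why the review still needs people.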

Why SaaS teams need more than a creative brief

For SaaS products, the brief has to go beyond brand direction and feature aspiration. It also needs technical requirements.

An engineering design brief should specify functional requirements, compliance expectations, and delivery needs. The guidance from Ripcord Design's engineering brief overview is especially relevant here: the brief needs to state primary functions, secondary features, applicable standards, and required deliverables so teams don't discover technical reality after concept work is already done.

This is what I mean. A PM who writes "improve permissions management" has not written a brief. A PM who clarifies required system behavior, known platform constraints, accessibility expectations, and what assets need to come out of the work has given the team something they can build against.

A strong brief for software usually includes details like:

- Functional expectations: What the feature must do, not just what it should feel like.

- System constraints: Existing APIs, legacy patterns, data dependencies, and performance expectations.

- Compliance requirements: Accessibility standards or regulated workflows, where relevant.

- Output expectations: Wireframes, prototypes, annotated flows, acceptance criteria, or testable states.

If the problem itself still feels fuzzy, starting from a sharp problem statement framework usually improves the brief immediately. Teams that can't state the problem cleanly tend to compensate by overspecifying the solution.

Practical rule: If your brief can't resolve a trade-off between speed, scope, and user clarity, it isn't a constitution yet. It's a note dump.

What a brief is not

It isn't a PRD in miniature.

It isn't a mood board with deadlines.

It isn't a stakeholder wish list cleaned up for presentation.

And it definitely isn't the document you write after decisions are already locked. By that point, the brief has stopped being a thinking tool and turned into compliance theater.

The best briefs do something simpler and harder. They give a team enough shared context to make good decisions when reality gets messy.

The Economic Case for a Great Design Brief

Teams often talk about briefs as if they're a design hygiene issue. They're not. They're a capital allocation issue.

Every unclear requirement creates a small option for rework later. In software, rework rarely arrives politely. It shows up as revised flows, fresh engineering estimates, new QA cycles, and awkward stakeholder meetings where everyone agrees the confusion "makes sense in hindsight." The brief is where you either buy down that risk early or finance it at a much higher rate later.

The clearest data point here is simple: well-crafted design briefs can reduce costly revisions and delays by up to 40%, and misalignment affects 70% of creative projects that lack one, according to Capterra's design brief explainer.

That matters because revisions don't cost just one team. They trigger a chain reaction.

Where the money actually goes

Leaders often underestimate the cost of a weak kickoff because they look for obvious waste. They count reworked screens and missed dates. They don't count the quieter losses.

A weak brief burns budget in at least four places:

- Design churn: Teams produce variants to answer questions the brief should have settled.

- Engineering ambiguity: Developers build to inferred intent, then rebuild once intent gets clarified.

- QA expansion: Test cases multiply when edge cases weren't named early.

- Stakeholder drag: Review cycles become negotiation sessions instead of decision sessions.

None of this looks dramatic in isolation. Together, it slows shipping and makes planning unreliable.

This is also why good product teams study concrete human-centred design examples, not just templates. The strongest examples usually show the same pattern: teams make better design decisions when the original brief ties user context to business intent instead of treating design as decoration.

Alignment work is not overhead

I've seen PMs defend a sloppy kickoff in the name of speed. It almost never holds up under scrutiny.

If a team spends extra time sharpening scope, naming constraints, and agreeing on success, that isn't delay. It's preemptive cost control. The brief is one of the few places where a few hours of clarity can prevent weeks of downstream argument.

A simple way to frame it for executives is this:

| Weak brief behavior | Likely downstream effect |
|---|---|
| Unclear goals | Teams optimize for different outcomes |
| Soft scope boundaries | Nice-to-haves creep into committed work |
| Missing success metrics | Reviews become opinion-driven |
| Unnamed constraints | Late-stage technical or compliance surprises |

The economics get even clearer when you look at planning tools. If you're trying to justify alignment time internally, a structured ROI template for product work helps translate "we need a better brief" into language finance and leadership teams respect.


The real trade-off

The trade-off isn't speed versus process.

It's brief clarity now versus expensive interpretation later.

Teams that resist writing strong briefs usually think they're avoiding bureaucracy. What they're often avoiding is the discomfort of forcing agreement early. But that discomfort doesn't disappear. It just waits until more people are involved and more work has already been done.

Common Design Brief Pitfalls

Last year, I watched a PM present a brief that was a masterpiece of compromise. Every stakeholder could find their own priority inside it. Which meant nobody had made a clear decision.

The document looked polished. It was also dangerous.

That kind of brief is common because teams assume the main risk is leaving something out. In practice, another risk is worse: putting contradictory things in and calling it alignment. A brief that promises speed, depth, flexibility, simplicity, and broad enterprise configurability without ranking them isn't neutral. It's evasive.

The most overlooked problem sits even earlier. Research highlighted in this brief analysis resource points to a critical gap in most design brief definitions: they explain what a brief contains, but offer very little guidance on how to analyze one. That's not academic nitpicking. It's the reason many teams read a brief, nod, and still walk away with incompatible interpretations.

The brief isn't the end of thinking

A brief should start a conversation, not close it.

When teams treat it as a final instruction sheet, they stop doing the work that matters most at kickoff. They don't separate explicit requirements from implied ones. They don't ask which assumptions are fragile. They don't identify where a phrase like "enterprise-ready" will mean one thing to sales, another to design, and a third to engineering.

I see four recurring failure modes.

- Weaponized vagueness: The brief uses broad language so stakeholders can agree without resolving tension. "Intuitive," "efficient," and "premium" are usual suspects.

- Metric theater: Success metrics sound measurable but aren't decision-ready. "Improve usability" doesn't tell a team what to optimize.

- Solution-first framing: The brief prescribes interface ideas before the team has aligned on the problem.

- Unexamined implications: A simple request implicitly carries operational complexity, but nobody names it early.

What good analysis looks like

Reading a brief well is a design and product skill in its own right. The team should interrogate it.

A useful review sounds like this:

| Question | What it exposes |
|---|---|
| What problem is explicit? | The stated project intent |
| What requirement is implied? | Hidden expectations or assumptions |
| Which term could different teams interpret differently? | Future rework triggers |
| What is missing that a QA lead would need? | Downstream handoff gaps |
| What trade-off is being avoided? | Political or strategic ambiguity |

That last question matters more than most teams realize. Many briefs fail because they hide the central trade-off. The team is really choosing between flexibility and speed, or breadth and depth, or configurability and ease of use. If the brief doesn't name the trade-off, the project will name it later through conflict.

Watch for this: if every stakeholder says "yes" immediately, the brief may be too vague to be useful.

Silent killers inside polished documents

Some briefs fail loudly. Others fail elegantly.

The polished ones are often more dangerous because they create the feeling of rigor. They have headings, neat language, and a reassuring amount of detail. But they still leave the team unable to answer basic questions under pressure.

A brief is in trouble when:

- The problem statement describes a feature request, not a user or business issue

- The audience is broad enough that almost any decision can be justified

- The scope includes likely edge cases but doesn't acknowledge them as such

- The success measure depends on data the team can't access or define cleanly

- The deliverables are named, but the decisions those deliverables must support are not

This is why strong teams don't just ask, "Did we write the brief?" They ask, "Did we pressure-test it?"

Design Brief Examples for SaaS Teams

A SaaS team can spend six weeks "building RBAC" and still arrive at kickoff problems they should have resolved on day one.

I've seen this happen more than once. Sales sells enterprise readiness. Support wants fewer permission tickets. Security wants tighter controls. Design wants an admin flow people can readily complete. Engineering sees a permissions model that touches half the product. If the brief treats all of that as one tidy feature request, the team pays for the ambiguity later through rework, exceptions, and launch delays.

That is why a good brief acts less like a spec and more like a project constitution. It defines what the team is solving, which trade-offs are acceptable, and what evidence will count as success when pressure rises.

Example 1. Role-based access control for a B2B product

Below is a brief strong enough to survive real cross-functional scrutiny.

Project overview

Enterprise accounts need admins to delegate operational tasks without granting full workspace access. Today, a small group of admins becomes a bottleneck for routine work, which slows teams down and increases support load.

Problem statement

The current permission model is too coarse. Admins either grant more access than they want or invent manual workarounds outside the product.

Desired outcome

Let admins create and assign custom roles by responsibility, while keeping setup clear for first-time account owners and preserving trust in account security.

Target audience

Primary users are workspace admins at mid-market and enterprise companies. Secondary users are contributors with limited access who need clear feedback about what they can view, edit, or request.

Constraints

The solution must work with the current permissions API. Existing default roles must remain intact for current accounts. New settings surfaces must meet accessibility requirements. Auditability matters because account changes may be reviewed later by IT or compliance teams.

Deliverables

Permission architecture, settings IA, role creation and assignment flows, system states, empty and error states, annotated prototype, event instrumentation plan, acceptance criteria, and QA scenarios for edge cases such as inherited permissions and revoked access.

Success metrics

Reduced admin bottlenecks, fewer support requests tied to access issues, and successful role setup by new account owners without assistance.

What makes this brief useful is not the amount of detail. It is the kind of detail.

It names the economic risk. If admins cannot delegate safely, the company absorbs that cost through support tickets, slower onboarding, stalled enterprise deals, and custom promises made by sales. It also names the design risk. A highly flexible role builder may satisfy edge cases but still fail if admins cannot predict the consequences of a permission change.

That trade-off should be explicit. Flexibility versus clarity is often the core decision in RBAC work.

| Brief component | Weak version | Strong version |
|---|---|---|
| Pain point | Customers need permissions | Admins cannot delegate safely without creating risk or manual work |
| Goal | Improve enterprise readiness | Reduce admin bottlenecks while preserving clarity and control |
| Constraint | Use current system | Work with the current permissions API and preserve existing defaults |
| Output | New settings screens | Decision flows, states, instrumentation, acceptance criteria, and QA scenarios |

Example 2. Trial-to-paid conversion work

Growth projects fail for a different reason. The brief often starts with a dashboard drop instead of a real diagnosis.

"Improve conversion" is not enough. A stronger brief states where the drop occurs, which user segment matters, and what the team will not change during this cycle.

Project overview

Self-serve trial users are reaching activation milestones but too few are converting to paid plans at the end of the trial period.

Problem statement

Users understand the product's core value during the trial, but pricing, plan differences, and upgrade timing create hesitation at the purchase step.

Desired outcome

Increase paid conversion from qualified trial accounts by improving plan selection, upgrade messaging, and purchase confidence without increasing refund or support volume.

Target audience

Product-qualified trial users at small and mid-sized companies. Secondary audience is internal sales, which needs cleaner signals about which accounts require human follow-up.

Constraints

Billing infrastructure will not change this quarter. Pricing strategy is out of scope. Any UX changes must preserve localization support and legal copy requirements.

Deliverables

Funnel diagnosis, revised upgrade path, pricing page decision support, lifecycle messaging recommendations, experiment plan, and tracking requirements.

Success metrics

Higher conversion among qualified trials, lower abandonment at the upgrade step, and no increase in billing-related support contacts.

This version does something a lot of SaaS briefs miss. It protects the team from solving the wrong problem. If billing architecture and pricing are fixed, the team knows not to burn two weeks debating changes that cannot ship. That saves time and, more importantly, political energy.

For early framing, a product canvas template helps teams get the problem, user, constraints, and outcome onto one page before the full brief hardens into a document people stop questioning.

Teams can also use AI tools for product managers to summarize research, cluster interview notes, or draft first-pass requirements. That work is useful. It does not replace judgment. The PM still has to decide which assumptions are safe, which metrics matter, and which trade-off the team is making.

Good design brief examples share one trait. Each sentence removes a plausible misunderstanding before it turns into design churn, engineering rework, or a launch that technically ships but misses the business case.

The Product Manager's Design Brief Checklist

Most PMs don't need another template. They need a better filter.

Before sending a brief, run it through a pressure test. Not a spelling check. A misinterpretation check. The point is to find where a designer, engineer, researcher, or QA lead might fill in blanks differently from you.

The pre-flight questions

Use these questions before kickoff.

Can a new teammate explain the problem without repeating my proposed solution?

If not, the brief is solution-heavy and problem-light.

Does each success metric include a clear measure and timeframe?

If the answer is no, you'll get subjective reviews later.

Could an engineer identify core dependencies from this document alone?

If they need a meeting just to uncover system constraints, the brief isn't ready.

Could a QA lead derive meaningful test scenarios from it?

Missing states, unclear permissions, and vague edge cases usually show up here first.

Have I stated what is out of scope?

Scope doesn't become clear by describing what matters. It becomes clear by naming what won't be addressed.

What phrase in this brief is most likely to be interpreted three different ways?

Rewrite that phrase before anyone starts work.

Which trade-off have I avoided naming?

Every serious project contains one.
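The question about success metrics is the easiest of these to check mechanically. As a rough sketch, a metric can be modeled as a description plus an explicit measure and timeframe, with anything missing reported back to the author. The `SuccessMetric` class, its field names, and the sample values below are hypothetical, chosen only to illustrate the check.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SuccessMetric:
    """A success metric as it might appear in a brief (field names are hypothetical)."""
    description: str
    measure: Optional[str] = None         # what gets counted, e.g. a ticket tag or event
    timeframe_days: Optional[int] = None  # window in which the change should show up


def missing_parts(metric: SuccessMetric) -> list[str]:
    """List which of measure and timeframe the metric still lacks."""
    gaps = []
    if not metric.measure:
        gaps.append("measure")
    if metric.timeframe_days is None or metric.timeframe_days <= 0:
        gaps.append("timeframe")
    return gaps


# "Improve usability" is the article's own example of metric theater.
vague = SuccessMetric(description="Improve usability")

# Invented values, shown only to contrast with the vague version.
ready = SuccessMetric(
    description="Fewer support requests tied to access issues",
    measure="weekly count of tickets tagged 'permissions'",
    timeframe_days=90,
)

print(missing_parts(vague))  # ['measure', 'timeframe']
print(missing_parts(ready))  # []
```

Passing this check does not make a metric the right one. It only means reviewers have something concrete to argue about.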

A sharper way to review

I like to score briefs on three dimensions.

| Dimension | What to ask |
|---|---|
| Clarity | Would two different readers reach the same understanding of the problem and outcome? |
| Buildability | Can design, engineering, and QA each act on this without inventing key context? |
| Decision quality | Does this document help the team resolve trade-offs under pressure? |

If a brief scores weakly on any one of those, kickoff isn't complete.
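The rule above is a minimum, not an average: one weak dimension blocks kickoff no matter how strong the other two are. A minimal sketch of that rule follows, assuming a 1-to-5 scale and a pass mark of 3. Neither number comes from the article, and the function names are made up for this example.

```python
DIMENSIONS = ("clarity", "buildability", "decision_quality")
PASS_MARK = 3  # hypothetical threshold on an assumed 1-5 scale


def weak_dimensions(scores: dict[str, int]) -> list[str]:
    """Return dimensions scoring below PASS_MARK; a missing score counts as weak."""
    return [d for d in DIMENSIONS if scores.get(d, 0) < PASS_MARK]


def kickoff_complete(scores: dict[str, int]) -> bool:
    """Kickoff is complete only when no dimension is weak."""
    return not weak_dimensions(scores)


# Invented scores: strong on two dimensions, weak on one.
scores = {"clarity": 4, "buildability": 2, "decision_quality": 4}

print(weak_dimensions(scores))   # ['buildability']
print(kickoff_complete(scores))  # False
```

Averaging these scores would give 3.33 and hide the problem, which is why the sketch checks each dimension on its own.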

PMs are also starting to use structured workflows and AI tools for product managers to tighten early thinking. That's useful, with one caveat. AI can speed up drafting, but it can't rescue a team that hasn't agreed on the actual problem. Fast synthesis is only valuable when the source context is real.

The test most teams skip

Read the brief once as if you're design. Then again as if you're engineering. Then again as if you're support or QA.

If the document changes meaning as you switch roles, that's the gap to fix before kickoff.

From Static Document to Live Context with Figr

Traditional briefs have one structural problem that no template fully solves. They go stale.

The PM writes the document on Monday. Analytics shift. The Figma library changes. Engineering learns about a dependency. Customer evidence evolves. The brief is still technically accurate in parts, but it has stopped being the most current source of truth. That gap is where teams start relying on memory, side conversations, and screenshots pasted into chat.

A better model is emerging: the brief as live context, not static text.

What changes when context stays connected

When the brief is connected to the actual product, a few chronic problems get smaller.

- Reality replaces assumption: The team starts from the current product experience, not a remembered version of it.

- Design systems stay grounded: Tokens, patterns, and component logic are pulled from the source instead of recreated from screenshots.

- Analytics can inform the brief directly: Funnel issues and drop-offs are easier to tie to the project intent.

- Artifacts stay aligned: PRDs, flows, edge cases, and prototypes don't have to be manually reconciled across tools.

The old brief was mostly descriptive. The new version can become diagnostic.

Why this is the next step

The design brief definition is changing in practice, even if many teams still use the old term. The document isn't disappearing. It's becoming a control layer that sits closer to the product itself.

That's why an AI design tool for product teams is interesting in this context. Not because AI makes briefs magically better, but because context-aware systems can reduce the manual drift between what the product is, what the team thinks it is, and what the project says it should become.

The future brief won't win by being longer. It will win by being more connected to reality.

For product leaders, that's the meaningful shift. The brief stops being a static kickoff artifact and starts acting more like a living operational model for the work.

The Brief is the Beginning

A kickoff goes sideways in familiar ways. Design explores one direction, engineering estimates another, and the PM discovers in week three that success was never defined tightly enough to call a hard trade-off. The brief did not fail because it was missing a section. It failed because it did not give the team a constitution for the work.

That is the point.

A strong brief does not remove debate. It raises the quality of debate. Teams still disagree. They should. The difference is that the disagreement happens inside a shared frame: the problem is clear, the constraints are visible, the success metrics are specific, and the open questions are named before they turn into expensive surprises.

That is why the design brief definition matters in SaaS product development. It sets the standard for alignment at the moment when a project is still cheap to shape and easy to derail.

Many PMs do not need another template. They need a better way to test whether a brief can survive first contact with execution. I usually start with one simple exercise: hand the brief to design, engineering, and leadership separately, then ask each person to explain the user problem, the primary metric, and the main constraint. If the answers drift, the project will drift too.

Start there.

Take the checklist from this article and score the last brief your team used. Find the largest gap. Maybe the metric was fuzzy. Maybe the constraint sat halfway down the page where nobody treated it as binding. Maybe the problem statement subtly included a preferred solution.

Fix that one weakness before the next kickoff. Better launches rarely begin with more documentation. They begin with sharper alignment.

If your team wants to turn briefs, product context, and design execution into one connected workflow, Figr is worth a look. It helps product teams ground decisions in the live product, generate the artifacts that usually slow kickoff, and move from vague intent to production-ready UX with less drift between teams.