It's 4:47 PM on Thursday. Your VP just asked for something visual to anchor tomorrow's board discussion. You have a PRD. You have bullet points. You have 16 hours and no designer availability.

This moment, this pressure to make an idea tangible, is where most products go wrong. We default to building everything, hoping a masterpiece emerges from the chaos. We ship a Swiss Army knife when the user just needed a single, sharp blade.

An agile minimum viable product (MVP) is not a cheaper version of your final product. It's a strategic scalpel. It is the smallest, sharpest experiment you can run to prove your core idea has a pulse before you bet the company on it.

The 4 PM Trap and the Escape Hatch

That daily pressure to build everything for everyone? I call it the 4 PM Trap. It’s a gravity well that pulls products toward bloat and delay, a slow drift into irrelevance. It feels productive, but it’s just motion.

An agile MVP is your escape hatch.

The whole point is to stop acting like a factory delivering features and start acting like a lab running an experiment. Time isn't a conveyor belt pushing features forward; it's a switchboard for connecting hypotheses to evidence. This changes the goal from "shipping V1" to "answering our most critical business question as fast as humanly possible."

From Wish List to Focused Experiment

I once watched a product leader present a six-month roadmap for a new analytics dashboard. It was comprehensive, detailed, and utterly disconnected from what the engineering team could actually build. The real problem? They were trying to build the entire courthouse before they had even identified a single suspect.

The agile MVP works more like a detective. You don’t prove the whole conspiracy on day one. You find just enough evidence, a single viable feature, to confirm your primary suspect, the core user problem. You use that initial validation to guide the next move.

This process reframes a messy problem into a focused outcome.

Why This Approach Wins

This isn't a philosophical preference; it’s a market reality. According to the Standish Group's CHAOS report, agile projects have a significantly higher success rate than projects run on traditional waterfall models. Why? Short, feedback-driven cycles are simply better aligned with how innovation actually works.

To get this right, understanding core agile software development best practices is non-negotiable. It’s what keeps you from ending up with a broken product development cycle.

The economic incentive is simple: you spend less time and money building the wrong thing. An agile MVP forces conversations away from gut feelings toward actual evidence. It creates a system where every feature must justify its existence. Does it solve the core problem? Will people actually use it?

The goal of an MVP is to start the process of learning, not end it. It’s the first step in a conversation with your users, spoken in the language of working software.

In short, the agile MVP is a discipline. It’s the structured process of saying "no" to almost everything so you can say a powerful "yes" to the one thing that truly matters now. Your next move isn’t to build a bigger roadmap. It’s to define the smallest possible experiment that tells you if you're even on the right track.

Framing Your Hypothesis Before Writing Code

An agile MVP without a clear hypothesis isn't a smart strategy; it's just a cheap product. It becomes an exercise in cutting features for speed, not a focused experiment for learning.

Before a single line of code gets written, you have to frame exactly what you need to learn.

The basic gist is this: your MVP is an experiment. Your hypothesis is the theory you’re testing, and your users are the lab. Vague goals like “increase engagement” are useless here. They offer no clear pass or fail state. What you need is a sharp, falsifiable statement that forces a clear yes or no answer.

Just last week, a PM spent three full days in a conference room debating feature scope. The team argued about button placement and API integrations. Late Wednesday afternoon, the lead engineer finally asked, "What question are we actually trying to answer with this release?"

Silence.

They had jumped straight to building solutions without ever agreeing on the problem. Defining the hypothesis first would have made every one of those decisions far simpler.

The Anatomy of a Powerful Hypothesis

A strong hypothesis isn't a guess. It’s a structured belief about cause and effect. It connects a specific feature to a specific user outcome, measured by a specific metric.

Think of it as a formula: We believe that by [building this specific feature] for [this specific user segment], we will achieve [this specific, measurable outcome].

Let’s see this in practice. A goal like "help founders manage their finances better" is a nice idea, but it’s not a testable plan.

Here’s the actionable version: “We believe that by providing a one-click runway forecasting tool for early-stage startup founders, we will see a 15% increase in weekly active users within our finance dashboard over the next six weeks.”

This is what I mean by sharp:

- The feature is specific: A one-click runway forecasting tool.

- The audience is defined: Early-stage startup founders.

- The outcome is measurable: A 15% lift in weekly active users.

- The timeframe is set: Six weeks.

Now the entire team has a north star. Designers know who they’re designing for. Engineers know what success looks like. The focus is undeniable, as seen in this example artifact for a runway forecasting UI.
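If it helps to see the formula as something you can actually check, here is a minimal sketch. The `Hypothesis` class, its field names, and the user counts in the usage lines are illustrative assumptions, not part of any real tool; only the runway forecasting statement itself comes from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """Hypothetical container for the 'We believe that by...' formula."""
    feature: str
    segment: str
    metric: str
    target_lift: float  # 0.15 means a 15% relative increase
    weeks: int

    def statement(self) -> str:
        return (
            f"We believe that by providing {self.feature} for {self.segment}, "
            f"we will see a {self.target_lift:.0%} increase in {self.metric} "
            f"over the next {self.weeks} weeks."
        )

    def is_validated(self, baseline: float, observed: float) -> bool:
        """True when the relative lift meets or beats the target."""
        if baseline <= 0:
            raise ValueError("baseline must be positive")
        lift = (observed - baseline) / baseline
        return lift >= self.target_lift


runway = Hypothesis(
    feature="a one-click runway forecasting tool",
    segment="early-stage startup founders",
    metric="weekly active users",
    target_lift=0.15,
    weeks=6,
)

print(runway.statement())
# Made-up numbers: 1,000 weekly active users before, 1,200 after six weeks.
print(runway.is_validated(baseline=1000, observed=1200))  # True
print(runway.is_validated(baseline=1000, observed=1100))  # False
```

The point of writing it down this way is the pass or fail state: the team agrees on `target_lift` and `weeks` before launch, so nobody gets to move the goalposts afterward.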

Moving from Belief to Evidence

This framework isn't just about better planning; it’s a system designed to replace opinions with evidence. It turns your development process into a learning engine. The goal is risk reduction, not just shipping code.

Why does this matter so much?

Companies that operate on assumptions build bloated products. They add features based on who yells the loudest. Teams that operate on hypotheses build lean, purposeful products. Every feature has to earn its place by providing validated learning. This is the essence of agility. You can learn more about how to validate features before writing a single line of code and de-risk your roadmap. A great first step is often validating ideas with a simple landing page.

Your hypothesis is the ultimate prioritization tool. If a proposed feature, meeting, or design tweak doesn't directly serve the goal of testing your core hypothesis, it's a distraction. You can safely ignore it.

With a sharp, focused hypothesis in hand, the next step is to translate it into an equally focused set of features. It’s time to find the one critical user journey that will give you the answer you need.

From Backlog Chaos to a Viable Feature Set

Your backlog has 200 items. It's a beautiful, well-intentioned list. The problem is that only three of those items actually matter for your agile MVP right now.

Which ones will prove or disprove your hypothesis?

This is the moment where ruthless prioritization separates the teams that ship from the teams that just plan. The goal is to move from a sprawling wish list to a single, critical user journey. Think of it like this: you’re packing a survival kit for a 24-hour hike. You take water, a map, and a light source. You do not take the entire camping store. Your backlog is the store; your MVP is the kit.

Everything else is dead weight.

Finding the Critical Path

A friend at a fintech startup once told me about their “infinity backlog.” It was a dumping ground for sales requests and CEO ideas. When it came time to build an MVP, they didn't look at the list.

Instead, they asked one question: "What is the absolute shortest path a user can take to experience the core value of our hypothesis?"

That single question cut their scope by 90%.

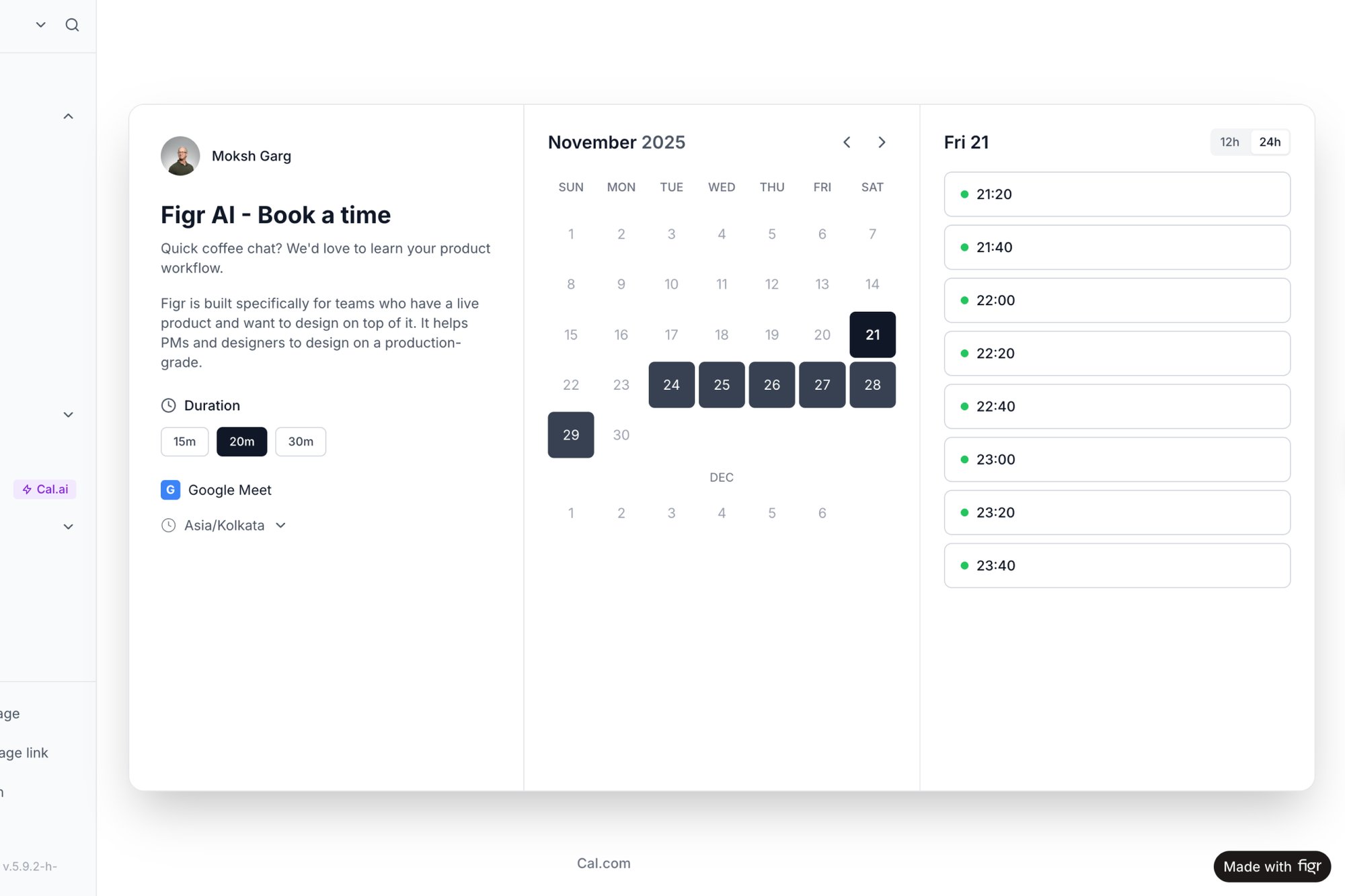

They were building a scheduling tool. So instead of worrying about calendar syncs or recurring events, they focused only on the user flow for creating an event and sharing a link. Could a user successfully book a time? That’s it. That was the test. This is the essence of defining “minimum” and “viable.” You aren’t shipping a broken product. You’re shipping a complete, tiny solution to a single, critical problem. A real-world example is this setup analysis for Cal.com, which breaks the flow down into its core components.

Mapping Value Against Effort

Once you have that critical user journey in mind, a simple value vs. effort matrix is your best friend. Plot every feature on two axes: how much value it delivers in that journey, and how much effort it will take to build.

With value on the vertical axis and effort on the horizontal, your MVP lives in the top-left quadrant.

- High Value, Low Effort: These are your MVP features.

- High Value, High Effort: Important, but too costly for the initial test.

- Low Value, Low Effort: Tempting distractions from the core test.

- Low Value, High Effort: Avoid these entirely.

Our guide on how to prioritize your product backlog goes deeper on this matrix approach.
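The four quadrants can be sketched in a few lines. Everything here is an assumption for illustration: the 1 to 10 scoring scale, the threshold of 5, the quadrant labels, and the scores given to each feature. The feature names borrow from the scheduling tool story above, plus one invented item ("custom themes").

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    value: int   # 1 (low) to 10 (high), scored against the critical journey
    effort: int  # 1 (low) to 10 (high)


def quadrant(feature: Feature, threshold: int = 5) -> str:
    """Place a feature in one of the four value vs. effort quadrants."""
    high_value = feature.value > threshold
    high_effort = feature.effort > threshold
    if high_value and not high_effort:
        return "mvp"          # High Value, Low Effort
    if high_value and high_effort:
        return "later"        # High Value, High Effort
    if not high_effort:
        return "distraction"  # Low Value, Low Effort
    return "avoid"            # Low Value, High Effort


def mvp_features(backlog: list[Feature]) -> list[str]:
    return [f.name for f in backlog if quadrant(f) == "mvp"]


# Hypothetical scores; your team supplies the real ones.
backlog = [
    Feature("create event", value=9, effort=3),
    Feature("share booking link", value=8, effort=2),
    Feature("calendar sync", value=7, effort=8),
    Feature("custom themes", value=2, effort=2),
    Feature("recurring events", value=3, effort=9),
]

print(mvp_features(backlog))  # ['create event', 'share booking link']
```

The scores will always be rough. What matters is that every item gets scored against the same critical journey, so the argument is about evidence for a number rather than about who wants the feature most.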

The Economic Reality of Prioritization

This isn't just a process preference; it's about survival. A staggering number of product launches miss expectations, often because they were bloated with features nobody asked for. You can learn more about these MVP frameworks and why some succeed where others fail.

This zoom-out moment reveals the real market incentives. At scale, companies that ship focused value win. Every bloated release is a tax on future development, a drag on your ability to respond to what you learn. The economic pressure is to learn faster, not build more.

An agile minimum viable product is an act of strategic subtraction. You are not deciding what to build. You are deciding what to learn first, and then building only what is necessary for that lesson.

Your backlog isn't a to-do list; it's a collection of unproven hypotheses. Your job is to find the most important one and design the cheapest experiment to test it. That experiment is your MVP feature set.

Creating High-Fidelity Prototypes Without the Wait

It’s 10:00 AM on a Tuesday. Your engineer just sent a Slack message: "What happens if the user’s network drops mid-upload?" You have a high-level user story, but you don’t have an answer.

This is the exact moment where "viable" can feel cheap or broken. But it doesn't have to be.

There's a dangerous myth that an agile MVP must be ugly. The goal is to test a real experience, not just a vague idea. To get authentic feedback, you need a prototype that feels real enough to trigger real human behavior. The trick is to create that realism without burning weeks on pixel-perfect mockups.

The process isn't about being slow and perfect. It's about being fast and clear.

The Prototype as a Conversation Starter

Think of a high-fidelity prototype as a conversation, not a contract. Its only job is to make an abstract idea tangible so you can ask better questions. Does this flow make sense? Do you understand what this button does? Where did you get stuck?

Wireframes are fine for internal discussions. They are terrible for testing a solution with actual users. Why? Because users don't live in a world of grey boxes. Shown a wireframe, they react to the unfinished artifact, not the core value proposition. A high-fidelity prototype skips past that noise and lets you test the actual experience. You can learn more about moving from a PRD to a functional prototype in our guide to accelerating your shipping workflow.

Borrowing Reality to Build Faster

The secret to speed isn't about cutting corners; it's about eliminating guesswork. Instead of starting from a blank canvas, you ground your design in what already exists.

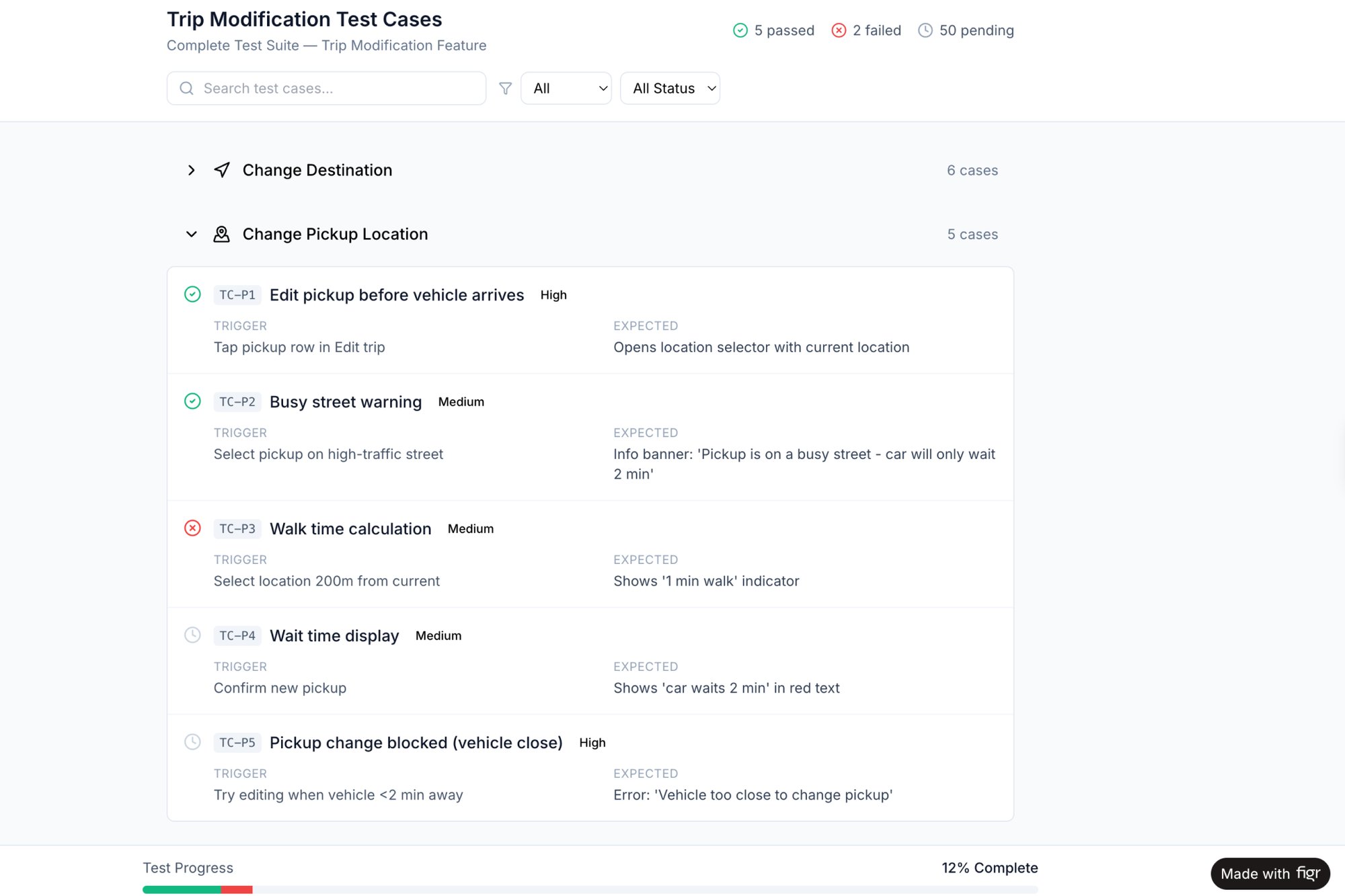

Here’s what I mean. Instead of guessing what an upload failure state should look like, you can analyze proven patterns. You could, for instance, explore a detailed breakdown of how Dropbox handles various upload failures and apply that battle-tested logic to your own product. This is much faster than debating from scratch. Curious readers can also see how we generated test cases for a Waymo trip modification, turning a complex scenario into a concrete plan.

Why reinvent the wheel when you can learn from a system that serves millions?

Your first prototype shouldn't feel like a guess. It should feel like an informed next step, built on the shoulders of established design patterns and your own product's DNA.

By using your existing product as the foundation, you ensure consistency and slash the time it takes to create something authentic.

Documenting the "What Ifs" Before They Derail a Sprint

High-fidelity prototypes aren't just for user testing. They are critical communication tools for your engineering team. A clear, interactive prototype answers dozens of questions before they're asked.

But a prototype alone is not enough for an agile minimum viable product.

You also have to map the less-than-happy paths. What happens when the API returns an error, the form is empty, or a third-party integration is down? These edge cases can turn a two-week sprint into a six-week slog. Mapping them isn’t gold-plating; it’s de-risking the entire project.
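One lightweight way to keep that map honest is to write it down as data and check it for gaps. This is only a sketch: the `FailureState` names and the `expected_behavior` entries are placeholder assumptions, and the right response for each state is a product decision your team has to make.

```python
from enum import Enum


class FailureState(Enum):
    API_ERROR = "API returns an error"
    EMPTY_FORM = "form is submitted empty"
    INTEGRATION_DOWN = "third-party integration is down"
    NETWORK_DROP = "network drops mid-upload"


# Hypothetical responses; replace with decisions made for your own product.
expected_behavior = {
    FailureState.API_ERROR: "show an inline error and offer a retry",
    FailureState.EMPTY_FORM: "block submission and highlight required fields",
    FailureState.INTEGRATION_DOWN: "explain the outage and keep the user's input",
}


def unmapped_states(spec: dict[FailureState, str]) -> list[FailureState]:
    """List failure states that have no agreed behavior yet."""
    return [state for state in FailureState if not spec.get(state, "").strip()]


print([state.name for state in unmapped_states(expected_behavior)])
# ['NETWORK_DROP']
```

Here the mid-upload network drop is still unanswered, which is exactly the kind of gap you want to find before the sprint starts rather than in a Slack message halfway through it.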

When you hand engineering a prototype alongside a map of potential failure states, you are handing them a complete package. This is the difference between a vague idea and a buildable spec. A well-crafted Product Requirements Document, supported by interactive flows, gives your team everything it needs. For instance, a detailed PRD for a new Spotify AI feature shows exactly what this looks like in practice.

It's all about providing clarity, not just pictures. This is how you build the right thing, right from the start.

Why Launching Your MVP Is Day Zero

The button is pushed. The code is live. Your team celebrates in Slack. It feels like the finish line, a moment of relief after weeks of focused effort.

But shipping your agile minimum viable product is not the victory lap.

It’s the starting gun.

Launching is Day Zero. Everything before this moment was just preparation, a well-researched guess. Now, the real work begins: the disciplined process of listening to what the market is telling you through the language of data and behavior.

From Harbor to Open Water

Think of your MVP launch as a ship leaving the harbor. You have a destination plotted on a map, your hypothesis, but you have no idea if the compass is calibrated. The real journey is a series of constant, tiny adjustments to the rudder based on instrument readings.

The data.

Your job as a product leader is no longer to build the ship. It’s to captain it. This means moving beyond vanity metrics like sign-ups. Those are the waves slapping against the hull; they tell you you’re moving, but not where you’re going. You need behavioral metrics, the deep currents that prove or disprove your core assumption.

For example, a team I know launched a new project management feature. They got a thousand sign-ups in the first week. The VP was thrilled. But the product manager was worried. Why? The data showed that while many users created a project, only 2% ever invited a collaborator. Their hypothesis was about improving team productivity, but the behavior showed it was a solo tool. The core assumption was wrong.

The Build-Measure-Learn Loop in Action

This is what Eric Ries famously called the Build-Measure-Learn feedback loop in The Lean Startup. It’s the engine of product development. You built the MVP. Now you must measure its impact and learn from the results to decide what to build next.

This isn't just theory; it’s economic survival. Startups that ignore MVPs are taking a massive risk. MVPs also matter for raising funds, because traction speaks louder than any pitch deck. The market doesn't reward comprehensive plans; it rewards validated learning. Every day your product is live is a chance to gather more data, which is the most valuable currency you have.

The purpose of an agile MVP is to maximize the amount of validated learning for the least amount of effort. Your launch isn't the end of development; it's the start of evidence-based development.

This mindset forces you to stay lean and responsive. You don't plan a six-month roadmap after launch. You plan the next two-week sprint based on what you just learned.

Your First Week Post-Launch Checklist

So, what does this look like in practice? The first seven days are critical. Your goal is to turn initial user activity into your next set of priorities.

Here’s a grounded takeaway for your first week on the open seas:

- Talk to Five Users: Find five real users who just tried your MVP. Ask open-ended questions: What did you hope this would do? Where did you get stuck?

- Analyze the Core Funnel: Look at the one critical user path. Where is the biggest drop-off? Are users completing the main job-to-be-done? Track this single, vital flow. For instance, you could use this interactive prototype of a scheduling page to see exactly what a successful journey should look like.

- Identify One Key Insight: Don't try to solve everything. Find the single biggest discrepancy between what you expected and what users actually did. This is your most important clue.

- Plan the Next Smallest Change: Based on that one insight, what is the tiniest change you can make to address it? This isn't a redesign. It's a small rudder adjustment. That becomes the hypothesis for your next sprint.
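For the funnel step in particular, the arithmetic is simple enough to sketch. The function name and the step counts below are assumptions made up for illustration, loosely echoing the project management story earlier (lots of sign-ups, very few collaborator invites); they are not real data.

```python
def biggest_drop_off(funnel: list[tuple[str, int]]) -> tuple[str, str, float]:
    """Return (from_step, to_step, drop_rate) for the steepest drop between adjacent steps."""
    if len(funnel) < 2:
        raise ValueError("a funnel needs at least two steps")
    worst = None
    for (prev_name, prev_count), (next_name, next_count) in zip(funnel, funnel[1:]):
        if prev_count == 0:
            continue  # nobody reached this step, so there is no rate to compute
        drop = 1 - next_count / prev_count
        if worst is None or drop > worst[2]:
            worst = (prev_name, next_name, drop)
    if worst is None:
        raise ValueError("no step had any users")
    return worst


# Hypothetical counts for one week of activity.
funnel = [
    ("signed up", 1000),
    ("created project", 600),
    ("invited collaborator", 12),
]

from_step, to_step, rate = biggest_drop_off(funnel)
print(f"{from_step} -> {to_step}: {rate:.0%} drop")
# created project -> invited collaborator: 98% drop
```

That one line of output is your candidate for the "one key insight": it tells you which step to ask your five users about first.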

This is about finding the signal in the noise and acting with precision. That is the true spirit of an agile minimum viable product.

Common Agile MVP Questions Answered

You’ve framed the hypothesis. You’ve shipped the code. Now the questions start. The theory of the agile minimum viable product is clean, but the practice gets messy.

Let's clear up some common points of confusion. Think of this as a set of guardrails to keep your experiment on course.

How "Minimum" Should My Agile MVP Be?

Your MVP needs to be minimal enough to ship quickly but viable enough to solve one core problem for one specific user. That's it. Can a real person complete the primary job-to-be-done and give you meaningful feedback? That is the real test.

Forget the number of features. It’s about the completeness of a single, critical user journey. A user should feel they have a simple, sharp tool in their hands, not a broken one.

What Is the Difference Between a Prototype and an MVP?

A prototype is a ghost. It’s a simulation you use to test a concept before code is written. It asks a simple question: Can users understand this idea? A great prototype, like this interactive scheduling page, is perfect for testing usability and flow.

An agile MVP is a real, functional product released to the world. It asks a much harder question: Will users actually use this to solve their problem? A prototype tests comprehension; an MVP tests market value.

How Do I Handle Stakeholder Pressure for More Features?

Your most powerful shield is the hypothesis you defined at the start. You have to frame every discussion around learning, not just building. When a stakeholder requests a new feature, don’t just say no.

Instead, ask a question.

"That's an interesting idea. How would that specific feature help us validate our core assumption that users need X to solve Y?"

This reframes the entire conversation. It shifts from a battle of opinions to a collaborative search for evidence. The discussion moves from "what we could build" to "what we must learn."

Is an MVP Only for New Products?

Absolutely not. This is probably one of the biggest misconceptions. The agile MVP mindset is just as powerful for established products as it is for new ventures.

Instead of launching a massive, high-risk redesign, you can release a small, iterative improvement to a tiny subset of your users. For example, rather than overhauling your entire settings page, you could test a new workflow on just 5% of your user base. This lets you validate a design change with minimal risk before committing to a full rollout. It's a method for de-risking any significant change, for a product on day one or day one thousand.
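If you're wondering how a 5% slice gets picked in practice, one common approach is deterministic hash bucketing, sketched below. This is an assumption about how you might do it, not a description of any particular feature-flag product; the function name, the experiment key, and the user IDs are all hypothetical.

```python
import hashlib


def in_rollout(user_id: str, experiment: str, percent: float) -> bool:
    """Deterministically place a user inside or outside a percentage rollout.

    The same user always gets the same answer for the same experiment,
    so nobody flips between the old and new workflow across sessions.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # buckets 0..9999
    return bucket < percent * 100          # 5% -> buckets 0..499


# Hypothetical user IDs, purely to show the split is close to the target.
users = [f"user-{n}" for n in range(10_000)]
exposed = sum(in_rollout(u, "new-settings-workflow", percent=5) for u in users)
print(f"{exposed / len(users):.1%} of users see the new workflow")
```

Including the experiment name in the hash means a user who lands in one test's 5% is not automatically in every other test's 5% too.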

An agile MVP isn’t a one-time launch; it's a continuous cycle of inquiry. To keep that cycle moving, your team needs tools that accelerate learning by grounding every decision in real product context. Figr helps you move from a vague idea to a high-fidelity prototype, complete with user flows and edge cases, so you can test your hypotheses faster and ship with confidence. Learn more at https://figr.design.