Monday morning, the sprint board looks healthy. Tickets are groomed. Designs are approved. Engineering starts building. Then a developer drops a message in Slack that seems harmless: “What happens if the user has no saved payment method but arrives here from the upsell modal?”

Everything stops for that question.

The designer opens Figma. The PM opens the spec. Engineering opens the existing flow in the product. Nobody finds a clean answer. A tiny ambiguity becomes a meeting, then another, then a string of workarounds that nobody feels good about. By the end of the week, the feature still ships, but slower, messier, and with less confidence than anyone expected.

That moment is where design quality assurance starts to matter.

The Cost of a Single Question

A single unresolved question rarely stays single. It spreads through the workflow. Engineering needs clarification. QA needs expected behavior. Support will eventually need an explanation. If the feature ships with the ambiguity intact, customers become the final reviewers.

I watched a PM at a Series C SaaS company deal with exactly this. The feature looked polished in review. The problem wasn't visual quality. The problem was that the design left too much unsaid: edge cases, field states, permission rules, and what should happen when real product constraints collided with the ideal flow.

The invisible tax on shipping

Many organizations treat this as normal friction. It is not. It's a hidden operating cost.

When design artifacts leave room for interpretation, teams pay in familiar ways:

- More decision latency: engineers wait instead of building

- More coordination overhead: PM, design, and QA have to reconstruct intent

- More rework: implemented behavior drifts from the original user experience

- More escape risk: unresolved details show up as production bugs, support tickets, or awkward patches

The most expensive design flaw isn't the one that looks bad. It's the one that forces the organization to improvise downstream.

This is why traditional conversations about quality often miss the point. Existing literature on design QA tends to focus on documentation and compliance, but rarely quantifies the business impact on production defects or revision cycles, a gap noted in DOE guidance on QA in design.

A better lens for the problem

The gist is this: design quality assurance is not a design-polishing exercise. It's a system for preventing ambiguity from turning into delivery drag.

That changes how you manage it.

Instead of asking whether a design is “done,” ask whether it is buildable, testable, traceable, and resilient under real conditions. That's a tougher bar. It's also the bar that protects velocity.

Because the cost of a design decision isn't set when a designer makes it.

It's set when the rest of the team has to interpret it.

What Is Design Quality Assurance

Design quality assurance is the discipline of verifying that a design can survive contact with reality before code is written. It sits between design intent and implementation. Its job is to make sure the design is clear enough for engineering, complete enough for QA, and grounded enough for the product to behave as expected.

It is not the same as a design critique. A critique asks whether the work is elegant, coherent, and aligned with user goals. Useful, yes. But that isn't enough.

It is also not the same as functional QA. Functional QA tests working software. Design quality assurance checks whether the specification itself is strong enough to produce good software in the first place.

Blueprint, not mood board

The closest analogy is construction. An architect can draw a beautiful building. A structural engineer then checks whether that building can stand. Product teams need the same split of responsibility.

A design file can be visually persuasive and still fail as a specification.

That's where the V-Model is useful. It treats quality as a chain of traceability between requirements, design, implementation, and validation. In that model, technical reviews are not optional ceremony. They exist to catch conflicts and omissions early, and defects found during design review cost 10 to 100 times less to remediate than defects found after launch, as explained in StickyMinds' discussion of design specification and QA.

What good DQA actually checks

A practical DQA pass usually verifies a few things:

- Intent is traceable: every key screen or flow ties back to a requirement or product decision

- States are explicit: loading, empty, error, permission, and edge conditions aren't implied

- Behavior is feasible: the solution fits the current architecture, data model, and component set

- Handoff is testable: QA can derive scenarios without guessing what “should” happen
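
The first two checks above are mechanical enough to sketch in code. The example below is a minimal illustration, not a prescribed tool: `ScreenSpec`, `REQUIRED_STATES`, and `dqa_gaps` are hypothetical names, and the list of required states is an assumption you would adjust for your own product.

```python
from dataclasses import dataclass, field
from typing import Optional

# Assumed baseline of states every screen must define; adjust per product.
REQUIRED_STATES = {"loading", "empty", "error", "permission"}


@dataclass
class ScreenSpec:
    """Hypothetical handoff record for one screen in a flow."""
    name: str
    requirement_id: Optional[str] = None            # link back to a PRD item, if any
    states: set = field(default_factory=set)        # states the design explicitly defines


def dqa_gaps(screen: ScreenSpec) -> list:
    """Return human-readable gaps; an empty list means none were found."""
    gaps = []
    if not screen.requirement_id:
        gaps.append(f"{screen.name}: no linked requirement")
    for state in sorted(REQUIRED_STATES - screen.states):
        gaps.append(f"{screen.name}: missing '{state}' state")
    return gaps


checkout = ScreenSpec("Checkout", requirement_id="REQ-12", states={"loading", "error"})
for gap in dqa_gaps(checkout):
    print(gap)
```

A check like this doesn't judge whether the design is good. It only tells you where engineering would otherwise have to guess.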

This is also why weak product requirements poison downstream quality. If the PRD is fuzzy, the design will absorb that ambiguity and pass it on. Teams that want better design assurance usually need stronger inputs first. That's where Tekk.coach AI PRD insights can help, because they sharpen the requirement layer before design turns it into workflow and UI.

For teams building a repeatable operating model, the Figr quality improvement framework is a useful companion read because it treats quality as a continuous system, not a final gate.

Practical rule: if engineering has to infer behavior from visuals alone, the design is not ready.

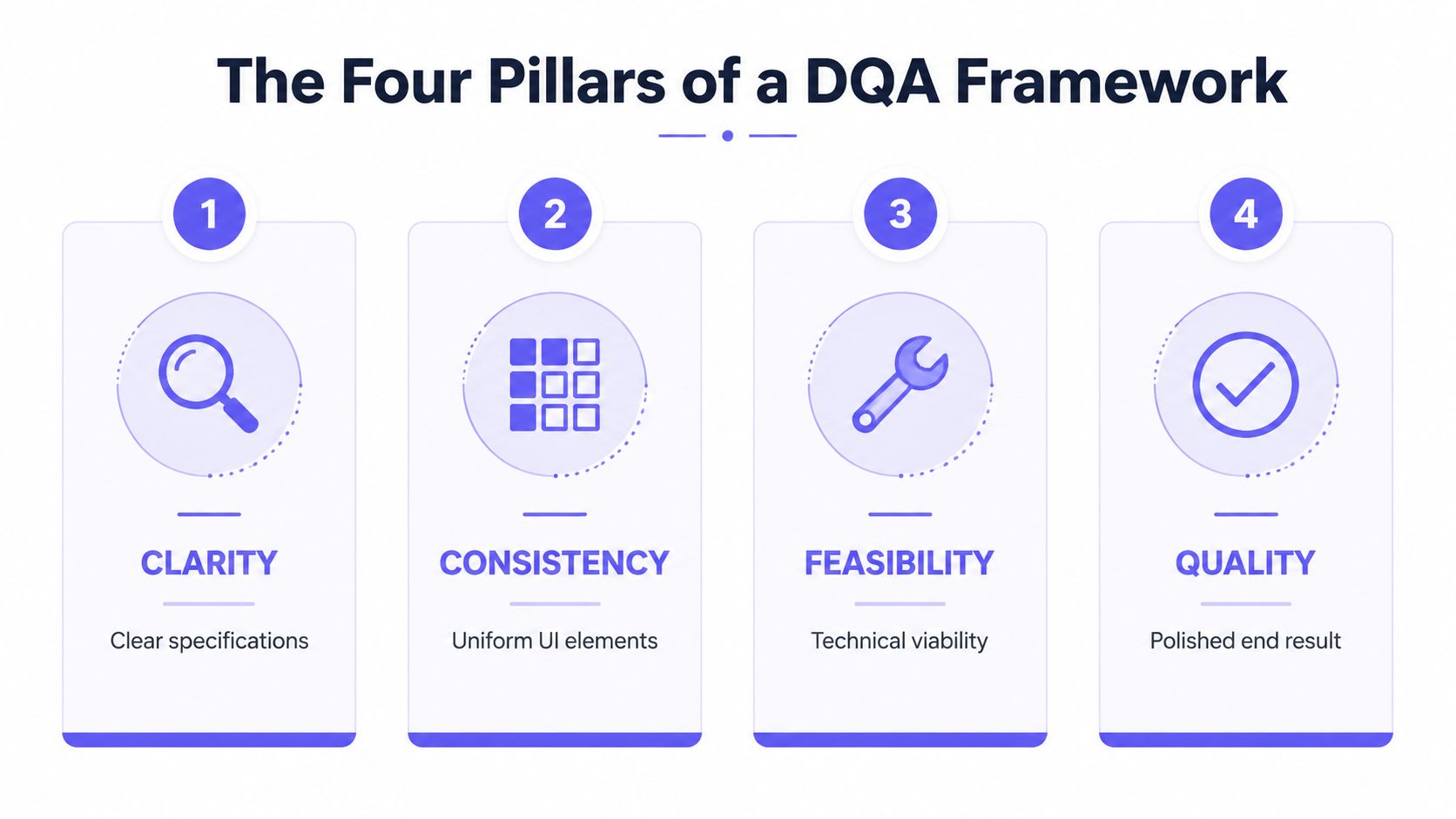

The Four Pillars of a DQA Framework

Designers often need a framework simple enough to use under deadline pressure. The one that works best in practice has four pillars: clarity, consistency, feasibility, and completeness.

These pillars are memorable because they mirror how quality fails in the wild. Designs usually don't break because nobody cared. They break because one of these four conditions wasn't strong enough.

Clarity and consistency

Clarity asks whether another team can execute the design without interpretation. Are user actions explicit? Are transitions specified? Does every field, message, and state say what it needs to say?

A good test is simple: hand the design to an engineer who wasn't in the design review. What questions do they ask in the first five minutes? Those questions are your quality gaps.

Consistency checks whether the feature behaves like the rest of the product. Does it use established patterns, component rules, and token logic? Or does it invent a new pattern that creates future maintenance debt?

If your system already has standards, this pillar should be unforgiving. Reinvented UI patterns usually feel fast in the moment and expensive later. That's why teams that are serious about system quality spend time mastering design tokens and components, not just curating pretty libraries.

Feasibility and completeness

Feasibility is where design stops being abstract. Can this be built with the current frontend architecture? Does the data exist? Are there permissions, APIs, or legacy constraints that make the design misleadingly optimistic?

I have seen attractive flows fail here more often than anywhere else. A design can “work” in Figma and still collapse when it meets real product constraints.

Completeness covers everything teams skip because the happy path looks finished. Empty states. Error states. Accessibility considerations. Localization implications. What the user sees when the system is slow, blocked, or partially configured.

If the happy path is polished but the failure path is undefined, the team hasn't finished design. It has postponed quality.

This framework also aligns with core ideas from data QA. Data quality assurance emphasizes accuracy, completeness, consistency, and validity, and prevention-based checks such as token validation and automated pattern matching can reduce manual QA effort by 60 to 80 percent, according to 6Sigma's overview of data quality assurance.
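
To make "token validation and automated pattern matching" concrete, here is a minimal sketch. It assumes styles arrive as a plain property-to-value mapping and that the token palette is a small dictionary; `TOKENS` and `find_untokenized_colors` are hypothetical names, not part of any real linter.

```python
import re

# Hypothetical token palette; a real system would load this from its token source.
TOKENS = {"color.primary": "#1A73E8", "color.danger": "#D93025"}

# Matches 3- or 6-digit hex color literals such as #fff or #1A73E8.
HEX_COLOR = re.compile(r"#(?:[0-9a-fA-F]{6}|[0-9a-fA-F]{3})\b")


def find_untokenized_colors(styles: dict) -> list:
    """Return (property, value) pairs that use a hex color outside the token palette."""
    allowed = {value.lower() for value in TOKENS.values()}
    findings = []
    for prop, value in styles.items():
        for match in HEX_COLOR.findall(value):
            if match.lower() not in allowed:
                findings.append((prop, match))
    return findings


styles = {
    "button.background": "#1a73e8",          # matches color.primary
    "banner.border": "1px solid #FF5733",    # not in the palette
}
print(find_untokenized_colors(styles))
```

The point of a check like this is prevention: drift from the system gets flagged before a reviewer has to spot it by eye.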

A quick pillar check

Use these prompts in review:

- For clarity: What could two engineers implement differently from the same design?

- For consistency: Where does this feature diverge from our existing component or interaction rules?

- For feasibility: What part of this solution depends on data, logic, or infrastructure we haven't confirmed?

- For completeness: What happens when the ideal path fails?

That's the system. Not a checklist for its own sake, but a way to catch uncertainty before it spreads.

Measuring What Matters in Design Quality

If design quality assurance only lives in opinions, it won't survive budget pressure. Leaders fund what they can see. Teams improve what they can measure.

The mistake is to measure only after code exists. By then, you've already absorbed the cost. Better design quality measurement connects design decisions to delivery outcomes: fewer clarifications, less rework, better defect closure, and steadier shipping.

Start with operational signals

One metric is especially useful because it connects quality discipline to release health: defect resolution percentage, calculated as (Total defects fixed / Total defects reported) × 100. Top teams resolve 90 to 95 percent of defects within a cycle, while low rates correlate with 20 to 30 percent shipping delays. Tracking this over time can reduce overall defect density by 40 percent, based on Tricentis' QA metrics analysis.
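
The formula is simple enough to compute in a few lines. The sketch below applies it as stated above; the function name and the input validation are illustrative choices, and the sample numbers are made up.

```python
def defect_resolution_percentage(fixed: int, reported: int) -> float:
    """(Total defects fixed / Total defects reported) x 100."""
    if reported <= 0:
        raise ValueError("reported must be a positive count")
    if not 0 <= fixed <= reported:
        raise ValueError("fixed must be between 0 and reported")
    return fixed * 100 / reported


# Example cycle: 50 defects reported, 46 fixed.
print(defect_resolution_percentage(46, 50))  # 92.0
```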

That metric belongs in a DQA conversation because unresolved downstream defects often begin upstream, in ambiguous specs, missing states, and weak handoffs.

A friend who runs product ops once told me their team stopped arguing about “design quality” the day they mapped design review misses to sprint spillover. Suddenly the conversation moved from taste to throughput.

Key Design Quality Assurance metrics

| Metric | Description | Goal |
|---|---|---|
| Defect Resolution Percentage | Share of reported defects fixed within the cycle | Move toward the range top teams achieve within a cycle |
| Design Clarification Count | Number of engineering or QA questions raised because the design or spec was unclear | Reduce over time as handoffs improve |
| Reworked Story Percentage | Share of stories sent back for redesign or major clarification after development starts | Keep trending down |
| Edge Case Coverage | Whether critical empty, error, and permission states are defined before build begins | Make coverage explicit before sprint commitment |
| Traceability Coverage | Portion of design outputs tied to clear requirements and expected behavior | Improve requirement-to-design mapping |

Notice the pattern. Only one row here has a hard benchmark from published QA data. The rest are custom operating metrics. That's okay. Not every important metric needs an industry benchmark. It needs a clear definition and consistent use.
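
Here is one way a team might define three of the custom metrics so they are computed the same way every sprint. This is a sketch under assumptions: the `Story` record and its field names are hypothetical, and your tracker will likely expose this data differently.

```python
from dataclasses import dataclass


@dataclass
class Story:
    """Hypothetical per-story record; field names are illustrative."""
    key: str
    clarification_questions: int   # questions raised because the design or spec was unclear
    reworked: bool                 # sent back for redesign after development started
    has_requirement_link: bool     # design output tied to a clear requirement


def pct(part: int, whole: int) -> float:
    """Percentage of part in whole; 0.0 when there is nothing to measure."""
    return part * 100 / whole if whole else 0.0


def sprint_metrics(stories: list) -> dict:
    """Compute clarification count, rework share, and traceability coverage."""
    total = len(stories)
    return {
        "design_clarification_count": sum(s.clarification_questions for s in stories),
        "reworked_story_pct": pct(sum(s.reworked for s in stories), total),
        "traceability_coverage_pct": pct(sum(s.has_requirement_link for s in stories), total),
    }


sprint = [
    Story("PAY-1", 3, True, True),
    Story("PAY-2", 0, False, True),
    Story("PAY-3", 1, False, False),
    Story("PAY-4", 0, False, True),
]
print(sprint_metrics(sprint))
```

Writing the definition down once, in code or in a doc, matters more than the exact formula. It keeps the number comparable from one sprint to the next.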

Score quality like a team sport

The discipline matters more than the formula. Define each metric once. Review it on a cadence. Decide what action follows when it moves in the wrong direction.

For teams already running goal systems, The OKR Hub's scoring advice is useful because it forces a hard question: are you measuring activity, or are you measuring an actual outcome? DQA should live in the second category.

A helpful companion is to understand UX metrics and their impact, especially if you're trying to connect design quality to adoption, friction, or retention without collapsing everything into one vague “experience” score.

What to watch: when clarification count falls but rework stays high, your issue probably isn't documentation volume. It's missing feasibility checks.

Putting DQA into Practice

Teams usually fail at design quality assurance in one of two ways. They either skip it entirely because speed feels urgent, or they turn it into a heavyweight review process that nobody wants to attend.

The middle path works better. A small, disciplined ritual before sprint commitment catches a surprising amount of risk.

Who should be in the room

Keep the group tight:

- PM: owns the requirement logic and business trade-offs

- Design lead: owns interaction intent, states, and system alignment

- Engineering lead: owns implementation constraints and technical risk

You can invite QA when the feature is especially sensitive, but the core trio is usually the most effective group. Too many attendees turn the review into passive observation.

What the meeting should produce

The output isn't approval theater. It is a short list of resolved risks and open questions.

A lightweight review agenda works well:

- Restate the user outcome so everyone is checking the same problem

- Walk the primary flow from trigger to completion

- Stress the non-ideal paths, including empty, error, partial, and permission states

- Confirm implementation realism against current components, data, and logic

- Assign follow-ups for anything unclear before sprint commitment

This doesn't need to become a big process artifact. A shared checklist is enough if the team uses it.

The checklist I would use

A practical DQA checklist often includes prompts like these:

- Clarity: Can engineering build this without asking what happens next?

- Consistency: Does this use approved components, token rules, and established patterns?

- Feasibility: Have we validated data needs, API assumptions, and edge logic?

- Completeness: Did we define loading, empty, error, disabled, and access-restricted states?

- Testability: Can QA derive acceptance scenarios directly from the design and spec?
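
If the checklist lives in a shared doc or a script, it helps to record an owner next to every open item. The sketch below is one possible shape, not a required format: `ChecklistItem` and `unowned_open_items` are hypothetical names, and the sample owner is made up.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChecklistItem:
    """One DQA prompt plus its review outcome."""
    pillar: str
    question: str
    resolved: bool = False
    owner: Optional[str] = None   # who follows up if the item stays open


def unowned_open_items(items: list) -> list:
    """Return pillars with an open question that nobody has been assigned to."""
    return [item.pillar for item in items if not item.resolved and item.owner is None]


review = [
    ChecklistItem("Clarity", "Can engineering build this without asking what happens next?", resolved=True),
    ChecklistItem("Completeness", "Did we define loading, empty, error, disabled, and access-restricted states?", owner="design lead"),
    ChecklistItem("Feasibility", "Have we validated data needs, API assumptions, and edge logic?"),
]
print(unowned_open_items(review))  # ['Feasibility']
```

Anything this returns is a question likely to come back during development, so it is worth assigning before the meeting ends.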

Last week I watched a PM use a version of this in a pre-sprint review. The meeting surfaced three issues quickly: a missing permission state, an error path that contradicted the PRD, and a component choice that engineering couldn't support cleanly. None of those issues were dramatic. All of them would have become expensive if they surfaced after implementation started.

A good DQA meeting feels almost boring. That's a sign the team is removing drama before it reaches delivery.

What doesn't work

A few habits consistently fail:

- Late review: if code has started, people defend sunk effort instead of fixing the design

- Design-only review: without PM and engineering, quality gets judged in a vacuum

- Checklist without ownership: unanswered questions linger and come back during development

- Treating DQA as a gate: the goal is risk removal, not bureaucratic approval

The zoom-out matters here. Teams say they want speed, but many workflows reward local speed over system speed. Design finishes quickly. Engineering starts quickly. Then the organization pays for ambiguity in slower delivery, more meetings, and preventable revisions. DQA fixes that by shifting effort to the cheapest point in the pipeline.

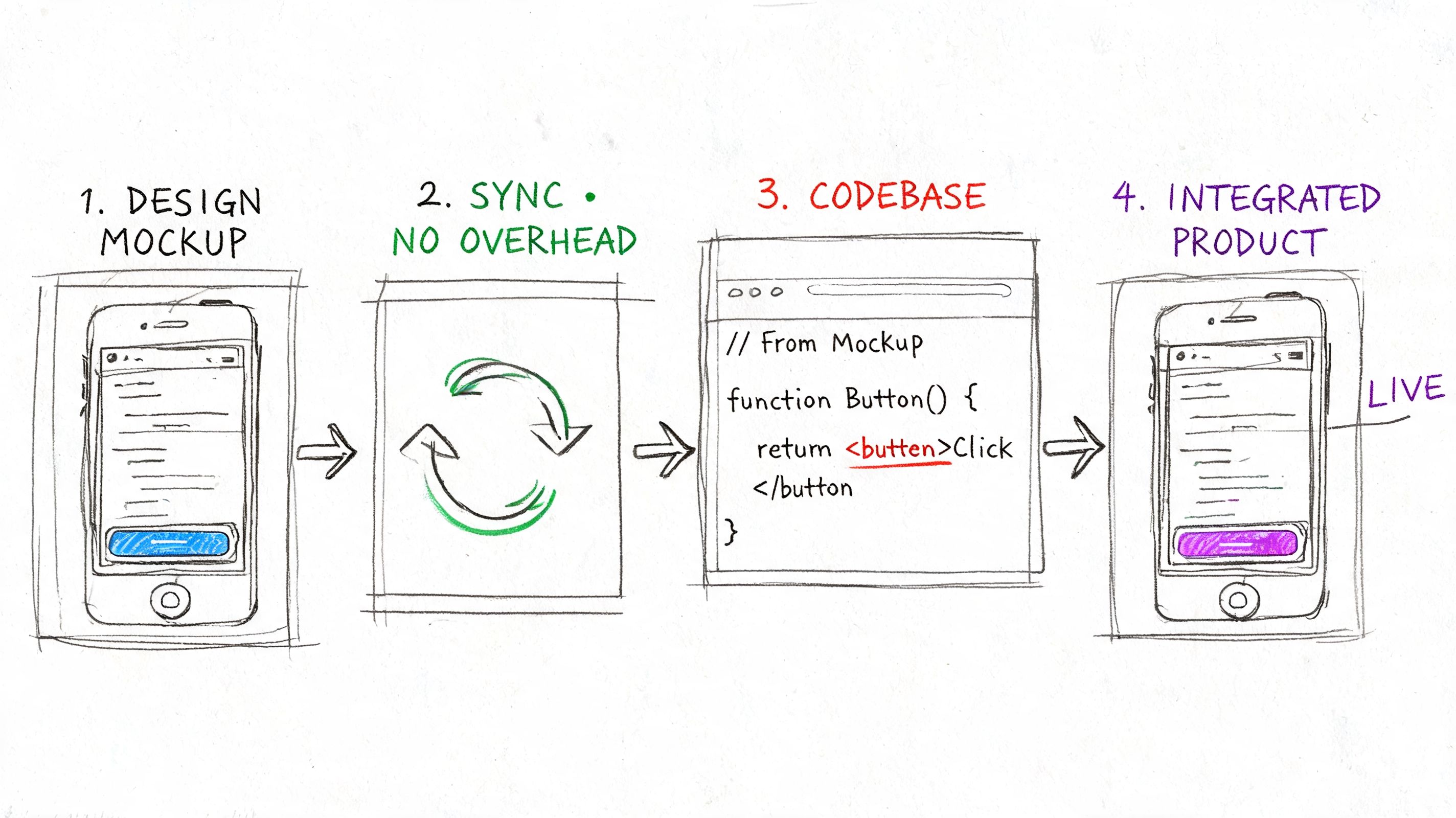

The Role of Tooling and Automation in DQA

Manual review catches a lot, but manual review alone doesn't scale. Once a team has multiple squads, a growing design system, and constant release pressure, quality starts leaking through the seams unless tools carry part of the load.

The smartest use of automation is not replacing judgment. It's removing repetitive checking so humans can spend their attention on trade-offs.

Where tools help most

Different tools support different pillars of design quality assurance:

- Consistency tools: Figma linters, token validators, and component usage checks catch system drift early

- Completeness tools: accessibility scanners and content checks flag missing labels, contrast problems, and structural issues

- Feasibility tools: visual regression workflows in Storybook ecosystems, Chromatic, and Percy expose implementation drift after handoff

- Clarity tools: spec generators, flow mappers, and test-case generation help translate design into testable behavior

This is also where teams should borrow from engineering test strategy. Good automation isn't just “more tests.” It is better placement of checks, clearer failure signals, and maintenance discipline. If you're refining that layer, optimizing test automation is a worthwhile read because the same logic applies to product UI quality.

AI changes the shape of review

The more interesting shift is what AI can do upstream. Bayesian methods in engineering reduce development cycles by 20 to 30 percent by integrating prior knowledge with new information, and that logic can be emulated by AI systems that analyze large pattern sets, including 200,000+ screens, to de-risk decisions and predict likely quality outcomes, as described in ASQ's article on assessing design quality during product development.

That matters because most design reviews still rely on whatever the room happens to remember. Pattern memory is weak. Teams forget edge cases. They miss regressions against the live product. They overestimate how novel a “new” solution really is.

A tool can help by comparing proposed work against actual product context, known patterns, and likely failure modes before handoff.

One option in that category is Figr, which can analyze existing product context, import design systems and tokens, generate edge cases and test cases from designs, and support reviews grounded in the current app rather than a blank canvas. If your team is moving toward automated verification between design intent and shipped UI, Figr's guide to UI automated testing is a practical next read.

What automation should not do

Automation should not become a substitute for product judgment.

Use it to answer questions like:

- Did we violate system rules?

- Did we miss obvious states or accessibility checks?

- Does the implementation still match the intended UI?

- Are there known patterns from similar screens we should consider?

Don't use it to outsource decisions about user value, business trade-offs, or whether the team is solving the right problem. Those remain human work.

The payoff is simple. Better tools let you move quality left without adding ritual. That is how design quality assurance becomes an advantage instead of overhead.

Your First Step to Better Quality

Teams rarely need a quality transformation plan. They need one better habit.

For your next feature, schedule a 30-minute review before the sprint starts. Bring the PM, the designer, and the engineering lead. Put the design on screen. Ask one question first: what's unclear? Then keep going until you've covered consistency, feasibility, completeness, and testability.

That's enough to change the shape of delivery.

If you need a starting point for the review itself, adapt a simple checklist from a broader UX audit framework for product managers. The point isn't to create another ceremony. It's to stop shipping ambiguity into engineering and calling the resulting churn “normal.”

Design quality assurance works because it changes where the team pays for thinking.

Pay earlier, and you pay less.

If you're trying to make design quality assurance operational, not just aspirational, Figr helps product teams turn live product context, design systems, edge cases, and QA artifacts into production-ready design work before handoff.